GIGABYTE Solutions for NVIDIA Rubin Platform

GIGABYTE solutions usher in a new era of Agentic AI, built on the NVIDIA Rubin platform to accelerate intelligent reasoning, autonomous decision-making, and scalable AI infrastructure.

The Expert in Agentic AI and AI Reasoning

Agentic AI, long discussed as a future prospect, is now within reach. With the NVIDIA Rubin platform, purpose-built for agentic AI and reasoning, GIGABYTE strives to deliver the most efficient solutions across industries, supporting a wide range of deployment scenarios.

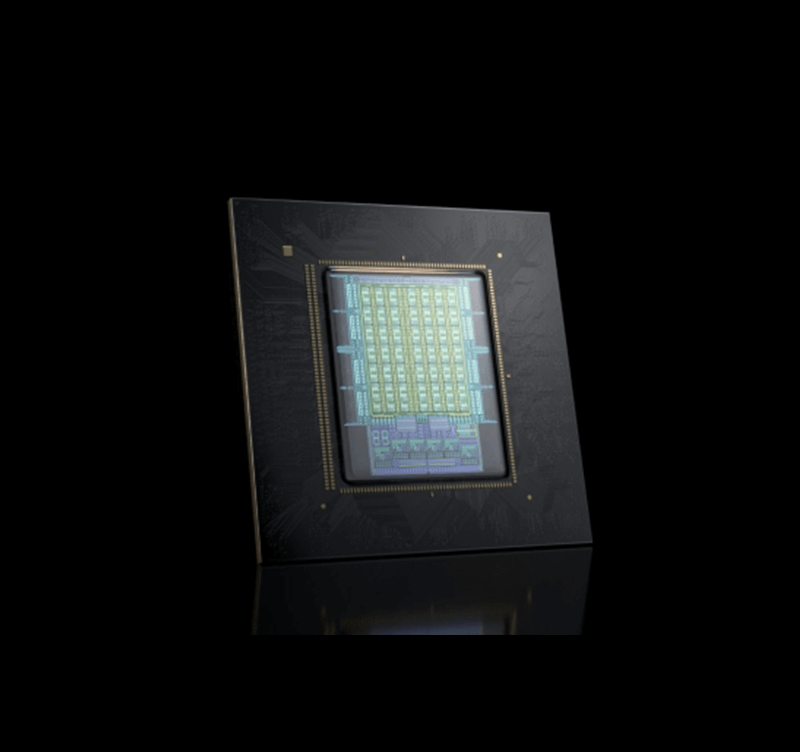

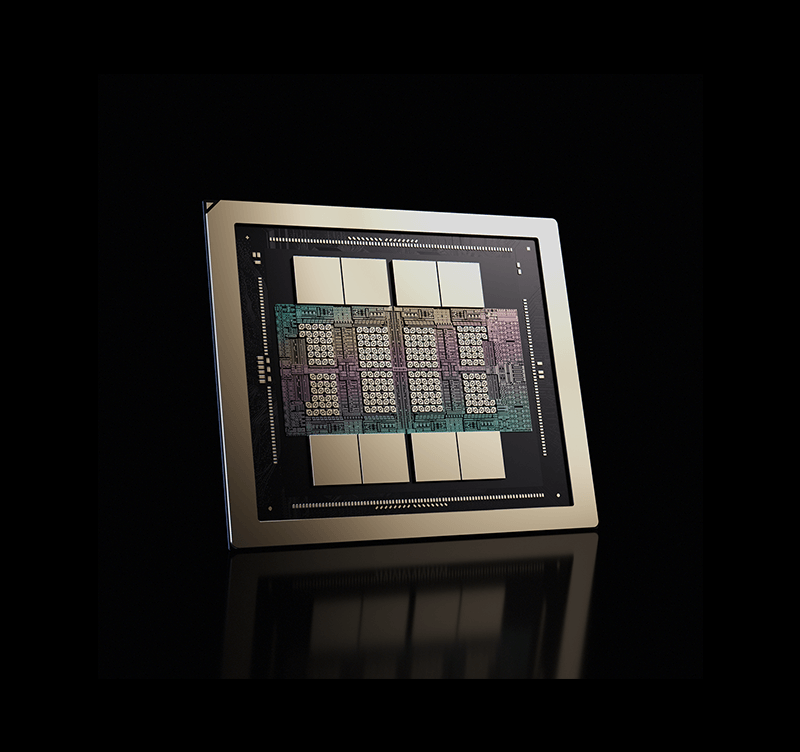

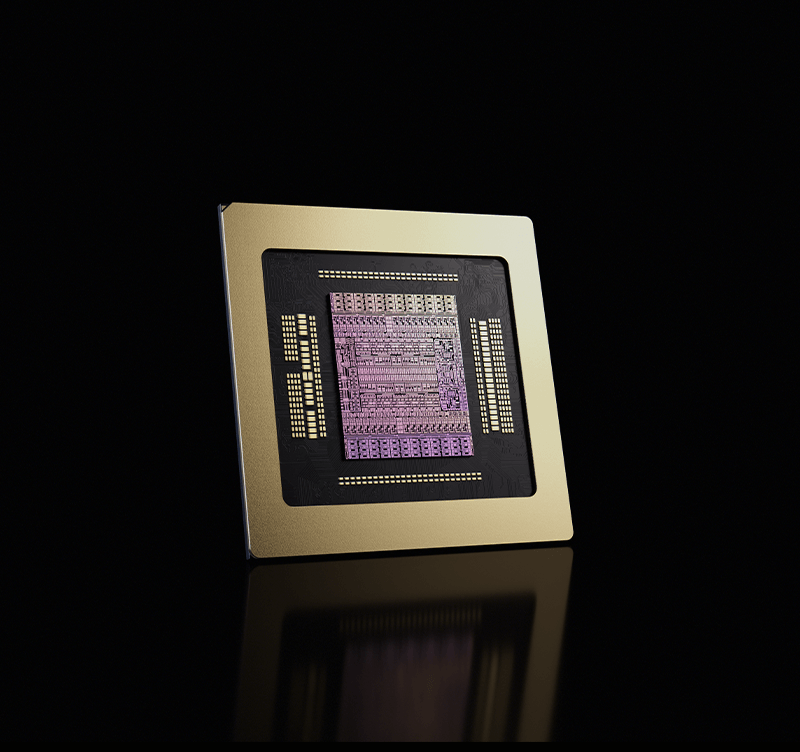

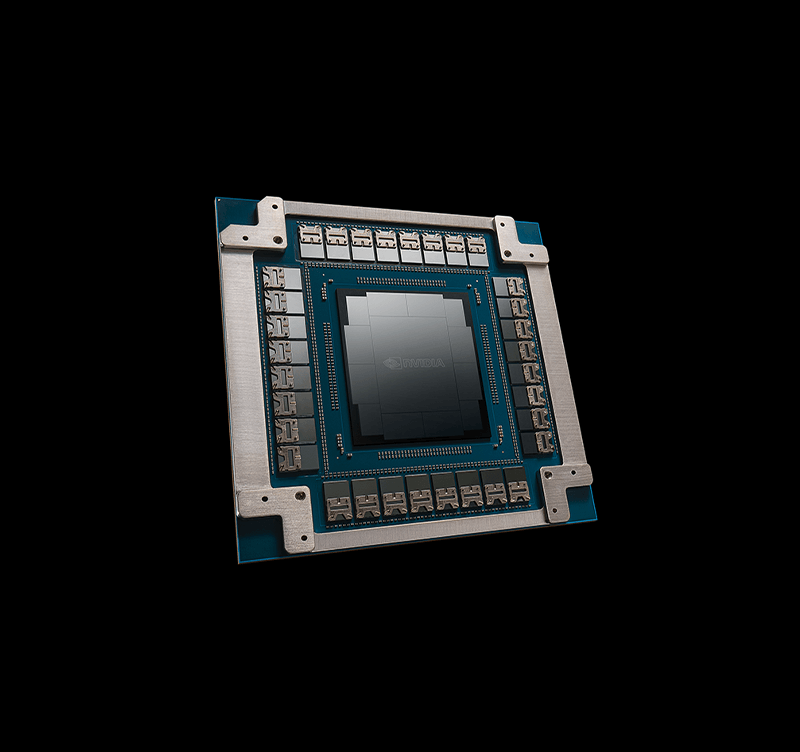

The NVIDIA Rubin platform goes far beyond a simple GPU upgrade. It introduces six new chips (CPU, GPU, NVLink Switch, DPU, NIC, and Ethernet switch) that together accelerate every aspect of AI computation.

The Six New Chips

NVLink 6 Switch

The scale-up fabric that tightly couples accelerators with uniform latency and sustained bandwidth.

ConnectX-9 SuperNIC

Delivers predictable scale-out performance while enforcing traffic isolation and secure operation.

BlueField-4 DPU

The software-defined control plane that enforces security, isolation, and operational determinism independently of AI computation.

Spectrum-6 Ethernet Switch

Purpose-built Ethernet fabric with co-packaged optics, engineered for highly synchronized, bursty, and asymmetric AI traffic.

The Five Generational Breakthroughs

6th Gen NVLink & NVLink Switch

3.6 TB/s of bandwidth per GPU for bandwidth-intensive workloads such as AI inference, with NVIDIA® SHARP™ in-network computing.

Vera CPU

Combines 88 NVIDIA-designed cores, up to 1.2 TB/s of LPDDR5X memory bandwidth, and the NVIDIA Scalable Coherency Fabric.

3rd Gen Transformer Engine

Delivers up to 50 petaFLOPS of NVFP4 inference performance per GPU, with new hardware-accelerated adaptive compression.

3rd Gen Confidential Computing

The world's first rack-scale confidential computing across CPU, GPU, and NVLink™ domains.

2nd Gen RAS Engine

Enables continuous in-system health monitoring, self-testing, and SRAM repair.

NVIDIA Vera Rubin NVL72

Unmatched Dense Performance

Delivers extreme GPU density in a single rack, enabling massive performance for trillion-parameter AI models and large-scale training workloads.

Designed for Next-Generation AI Factories

Built specifically for AI training efficiency and inference cost reduction. Tuned for large models and high throughput, providing maximum efficiency where every millisecond counts.

Integrated, Fully Engineered System

Comes as a cohesively engineered rack system, including custom cooling, power distribution, and networking, enabling rapid, cable-free deployment at scale.

Specifications¹

| Metric | Value |
|---|---|
| NVFP4 Inference | 3,600 PFLOPS |
| NVFP4 Training² | 2,520 PFLOPS |
| FP8 / FP6 Training² | 1,260 PFLOPS |
| INT8² | 18 POPS |
| FP16 / BF16² | 288 PFLOPS |
| TF32² | 144 PFLOPS |
| FP32 | 9,360 TFLOPS |
| FP64 | 2,400 TFLOPS |
| FP32 SGEMM³ | 28,800 TFLOPS |
| FP64 DGEMM³ | 14,400 TFLOPS |
| GPU Memory | 20.7 TB HBM4 |
| GPU Memory Bandwidth | 1,580 TB/s |
| NVLink Bandwidth | 260 TB/s |
| NVLink-C2C Bandwidth | 65 TB/s |

¹ All values are "up to" figures and are subject to change.

² Dense specification.

³ Peak performance using Tensor Core-based emulation algorithms.
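As a rough consistency check, the rack-level totals above can be divided back down to a single GPU. This is a minimal sketch that assumes the "NVL72" name denotes 72 Rubin GPUs per rack and that each total splits evenly across them; the per-GPU HBM4 capacity and bandwidth it prints are derived here, not published figures. The NVFP4 inference and NVLink results line up with the up to 50 petaFLOPS (3rd Gen Transformer Engine) and 3.6 TB/s (6th Gen NVLink) per-GPU figures quoted earlier on this page.

```python
# Rack-level figures copied from the Vera Rubin NVL72 specification table.
# Assumption: "NVL72" denotes 72 Rubin GPUs per rack, sharing totals evenly.
GPUS_PER_RACK = 72

RACK_SPECS = {
    "NVFP4 inference (PFLOPS)": 3_600,
    "NVFP4 training, dense (PFLOPS)": 2_520,
    "FP8/FP6 training, dense (PFLOPS)": 1_260,
    "HBM4 capacity (TB)": 20.7,
    "HBM4 bandwidth (TB/s)": 1_580,
    "NVLink bandwidth (TB/s)": 260,
}


def per_gpu(total: float, gpus: int = GPUS_PER_RACK) -> float:
    """Divide a rack-level total evenly across the GPUs in the rack."""
    return total / gpus


if __name__ == "__main__":
    # Print each rack total next to its derived per-GPU share.
    for name, total in RACK_SPECS.items():
        print(f"{name:35s} rack={total:>8,} per GPU={per_gpu(total):8.2f}")
```

Dividing 3,600 PFLOPS by 72 gives exactly 50 PFLOPS, while 260 TB/s over 72 GPUs comes to about 3.61 TB/s, reflecting that the rack figure is rounded.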

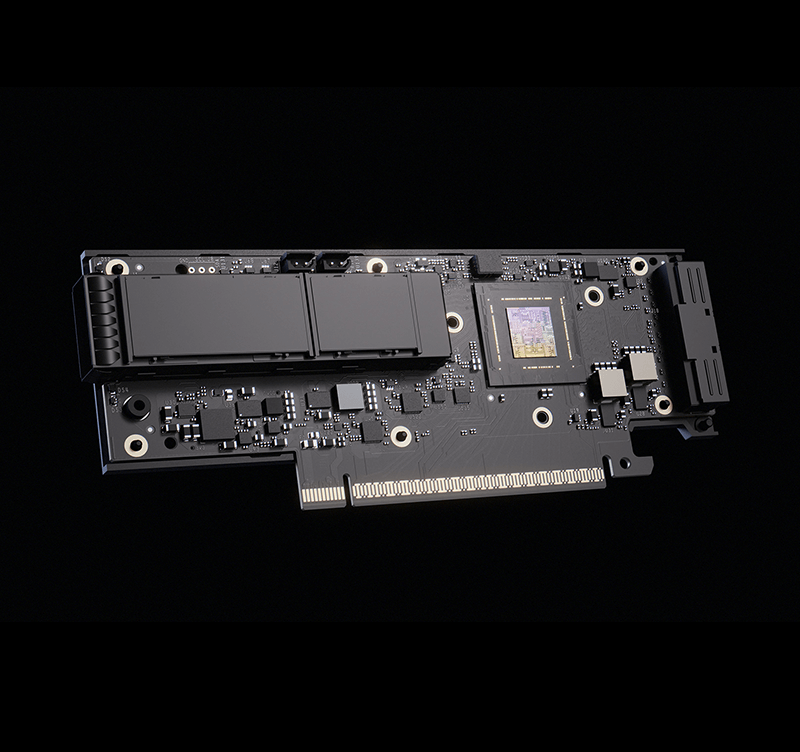

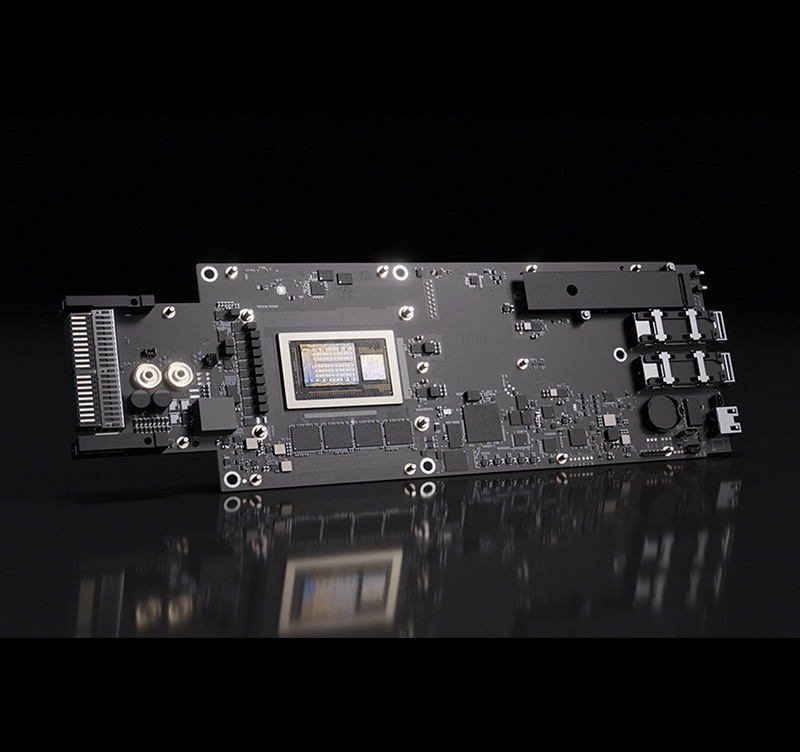

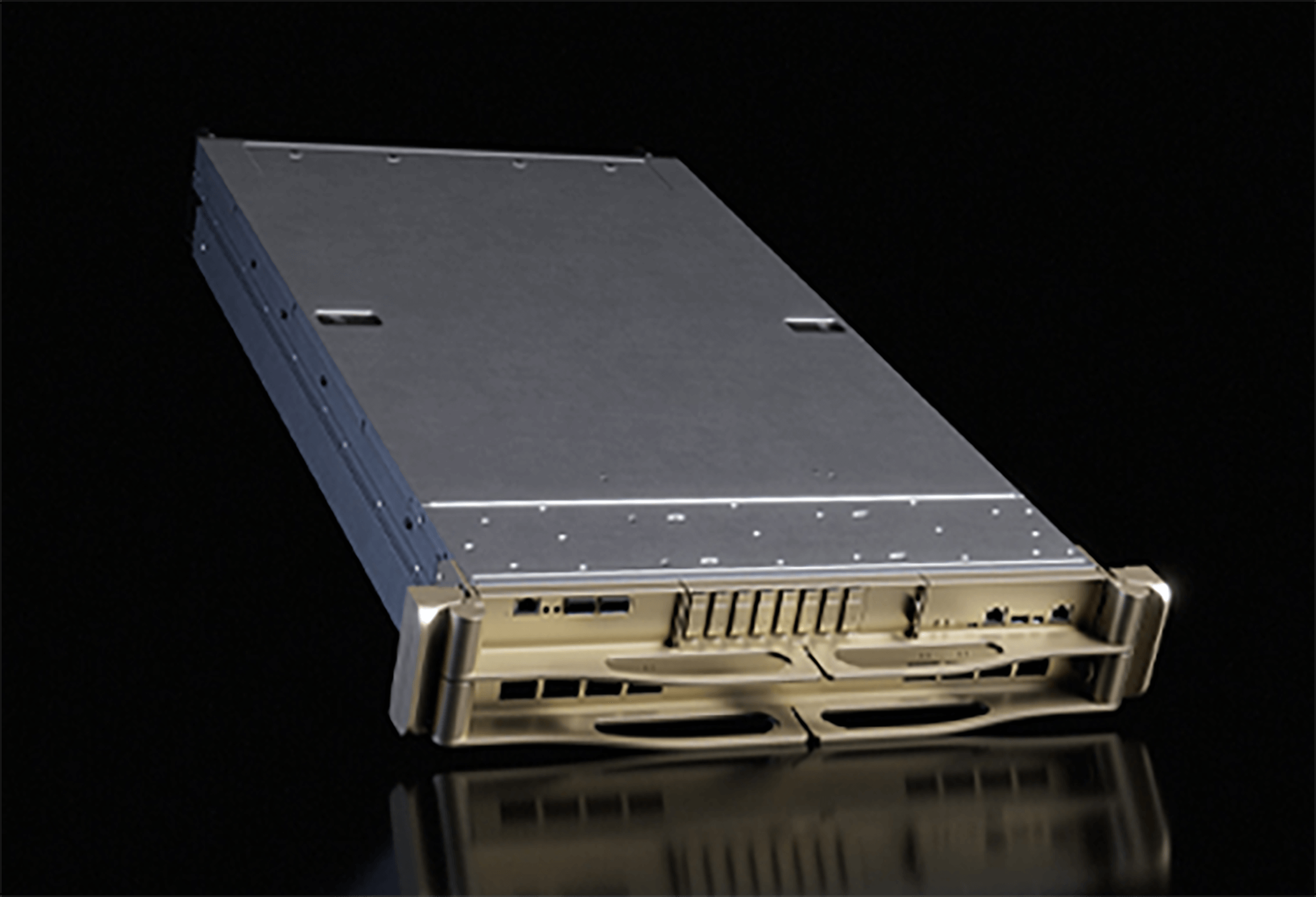

NVIDIA Rubin NVL8

Flexible Scaling for Any Deployment

Scale from single-node to multi-node GPU clusters without committing to a full rack-scale architecture. Ideal for phased expansion and mixed AI workloads.

Lower Infrastructure Requirements

Fits into conventional server and rack environments, reducing the need for specialized power, cooling, and facility redesign.

Broader Platform Compatibility

Supports a wider range of configurations, networking choices, and workload types, making it well suited to enterprises running everything from AI training to inference and HPC.

| Specification | Value |
|---|---|
| GPU | 8x NVIDIA Rubin GPUs |
| Total GPU Memory | 2.3 TB |
| GPU Memory Bandwidth | 160 TB/s |
| CPU | 2x Intel® Xeon® 6 processors |
| NVIDIA NVLink Switch System | 4x |
| NVIDIA NVLink Bandwidth | 28.8 TB/s total bandwidth |
| Networking | 8x OSFP ports serving 8x single-port NVIDIA ConnectX®-9 VPI, up to 800 Gb/s NVIDIA InfiniBand and Ethernet; 2x 400G QSFP112 NVIDIA BlueField®-4 DPUs, up to 800 Gb/s NVIDIA InfiniBand and Ethernet |
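A quick calculation ties the NVL8 table to the per-GPU bandwidth quoted for 6th Gen NVLink: eight GPUs at 3.6 TB/s each give the 28.8 TB/s total listed above. The minimal sketch below applies the same arithmetic to both Rubin configurations on this page, assuming NVLink bandwidth and memory scale linearly with GPU count, that NVL72 means 72 GPUs, and decimal units (1 TB = 1,000 GB). For NVL72 it yields about 259.2 TB/s, consistent with the rounded 260 TB/s rack figure, and both systems work out to roughly 288 GB of HBM4 per GPU; those per-GPU memory values are derived, not published.

```python
# Assumption: every GPU contributes the 3.6 TB/s quoted for 6th Gen NVLink.
NVLINK_PER_GPU_TBPS = 3.6


def aggregate_nvlink(gpus: int, per_gpu_tbps: float = NVLINK_PER_GPU_TBPS) -> float:
    """Total NVLink bandwidth if every GPU drives its full per-GPU link budget."""
    return gpus * per_gpu_tbps


def hbm_per_gpu_gb(total_tb: float, gpus: int) -> float:
    """Per-GPU memory in GB, using decimal units (1 TB = 1,000 GB)."""
    return total_tb * 1_000 / gpus


if __name__ == "__main__":
    # GPU counts and total HBM4 capacity taken from the two spec tables.
    # Assumption: "NVL72" denotes 72 GPUs per rack.
    for label, gpus, hbm_tb in (("Rubin NVL8", 8, 2.3), ("Vera Rubin NVL72", 72, 20.7)):
        print(f"{label}: NVLink ~{aggregate_nvlink(gpus):.1f} TB/s, "
              f"HBM4 ~{hbm_per_gpu_gb(hbm_tb, gpus):.0f} GB per GPU")
```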

Why GIGABYTE?

Short Time-to-Market (TTM) for Agile Deployment

Flexible Scalability for Diverse Scenarios

One-Stop Deployment for Zero Hassle

Unified Management for Easy Maintenance

Strong Partnership for All-Round Support

Extensive Global Experience for Maximum Flexibility

GIGAPOD - One-Stop Scalable Solutions

At GIGABYTE, we offer GIGAPOD, a solution that scales from a single rack to POD-scale and containerized data centers, with power, cabling, cooling, and all other infrastructure carefully designed and evaluated. The result is a simple, one-step, pain-free path to adopting AI data centers.

Ready-to-Deploy Software Ecosystem

Accelerate time-to-value with a flexible, pre-validated software stack. From single-node management to POD-scale orchestration, it delivers seamless deployment and adaptable control for any workload.