AMD Instinct™ MI350 Series Platform

Leadership Performance, Cost Efficiency, Fully Open Source

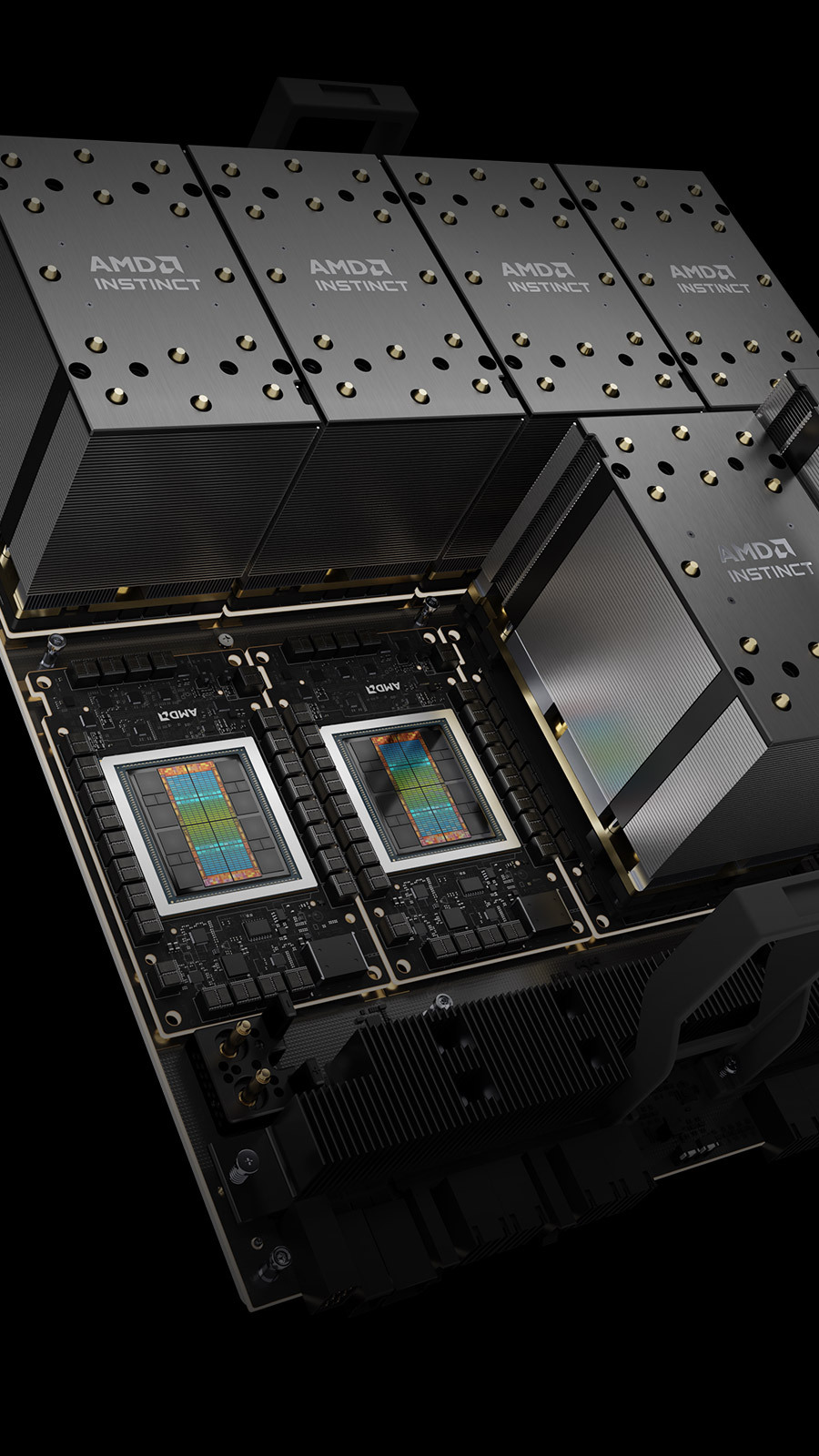

AMD Instinct™ MI350 Series

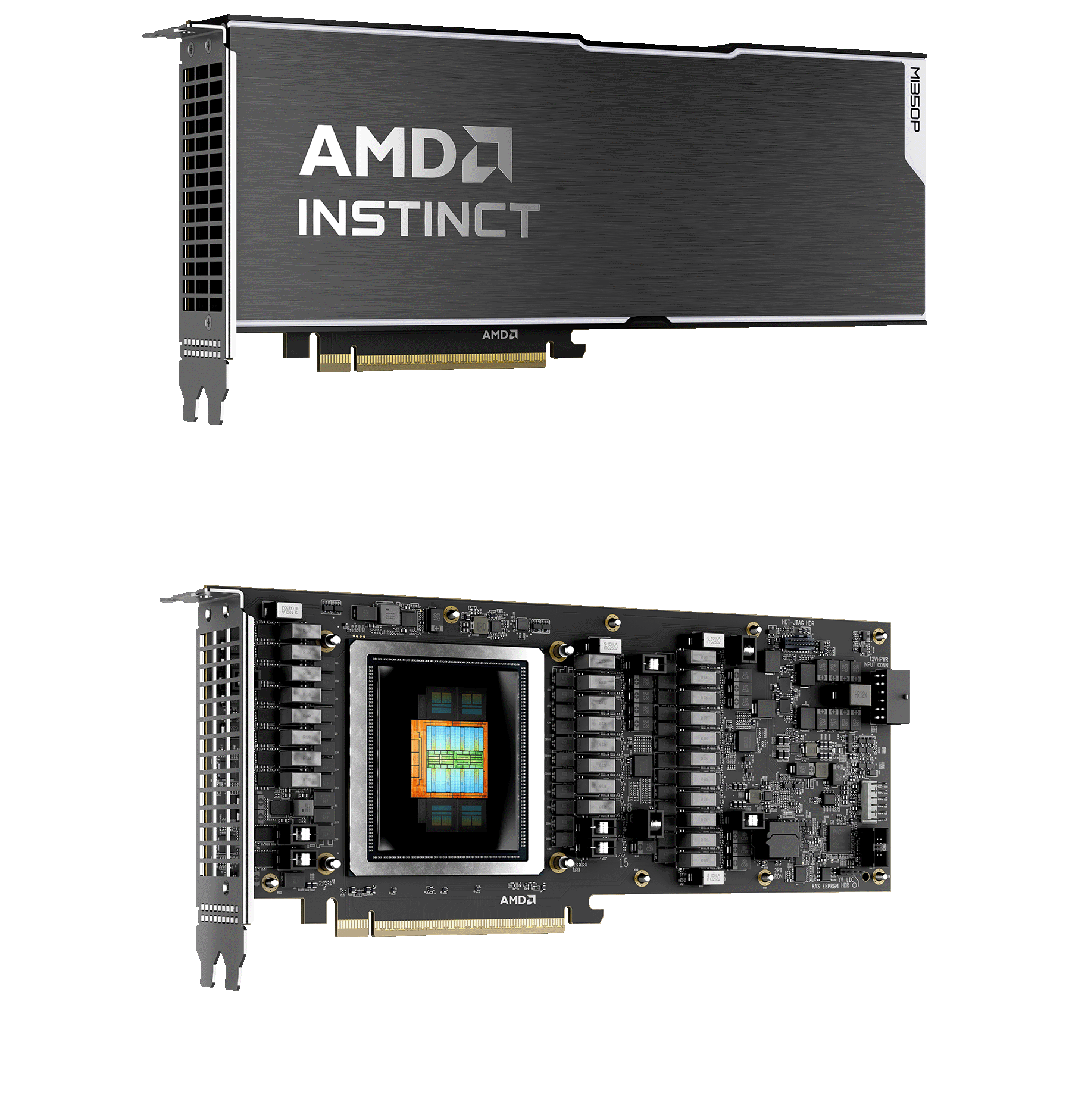

Overview: PCIe Card and OAM Module

| Feature | MI350P |
|---|---|
| Form Factor | Dual-slot PCIe CEM |
| GPU Architecture | AMD CDNA™ 4 |
| GPU Compute Units | 128 |
| INT8 / INT8 (Sparsity) | Supported (w/ 2:4 sparsity) |
| FP8 | Supported (w/ 2:4 sparsity) |
| FP16, BF16 | Supported (w/ 2:4 sparsity) |
| MXFP4 | Supported |
| FP64 | Supported |
| Dedicated Memory Size | 144 GB HBM3E |
| Memory Bandwidth | 4.0 TB/s |
| Bus Interface | PCIe Gen5 x16 |
| Cooling | Passive |
| Power Connector | 16-pin 12VHPWR |
| TBP | 600W (450W configurable) |
| Virtualization Support | Up to 4 partitions |

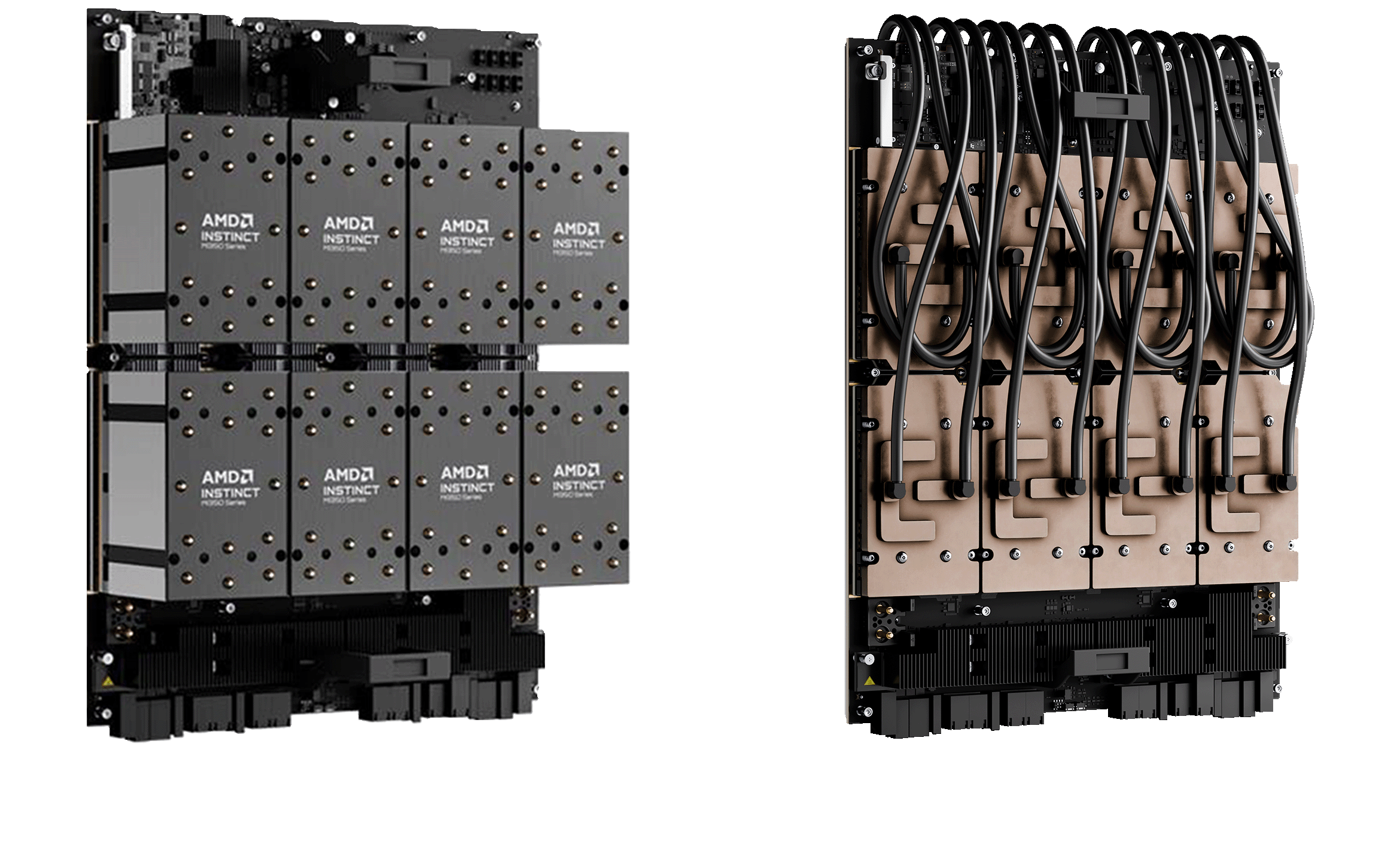

| AMD Instinct™ Model | MI355X GPU | MI350X GPU | MI325X GPU |
|---|---|---|---|
| Process Technology (XCD / IOD) | TSMC N3P / TSMC N6 | TSMC N3P / TSMC N6 | TSMC N5 / TSMC N6 |
| GPU Architecture | AMD CDNA™ 4 | AMD CDNA™ 4 | AMD CDNA™ 3 |
| GPU Compute Units | 256 | 256 | 304 |
| Stream Processors | 16,384 | 16,384 | 19,456 |
| Transistor Count | 185 Billion | 185 Billion | 153 Billion |
| MXFP4 / MXFP6 | 10.1 PFLOPS | 9.2 PFLOPS | N/A |
| INT8 / INT8 (Sparsity) | 5.0 / 10.1 POPS | 4.6 / 9.2 POPS | 2.6 / 5.2 POPS |
| FP64 (Vector) | 78.6 TFLOPS | 72.1 TFLOPS | 81.7 TFLOPS |
| FP8 / OCP-FP8 (Sparsity) | 5.0 / 10.1 PFLOPS | 4.6 / 9.2 PFLOPS | 2.6 / 5.2 PFLOPS |
| BF16 / BF16 (Sparsity) | 2.5 / 5.0 PFLOPS | 2.3 / 4.6 PFLOPS | 1.3 / 2.6 PFLOPS |
| Dedicated Memory Size | 288 GB HBM3E | 288 GB HBM3E | 256 GB HBM3E |
| Memory Bandwidth | 8 TB/s | 8 TB/s | 6 TB/s |
| Bus Interface | PCIe Gen5 x16 | PCIe Gen5 x16 | PCIe Gen5 x16 |
| Cooling | Passive & Liquid | Passive | Passive & Liquid |
| Maximum TDP/TBP | 1400W | 1000W | 1000W |
| Virtualization Support | Up to 8 partitions | Up to 8 partitions | Up to 8 partitions |

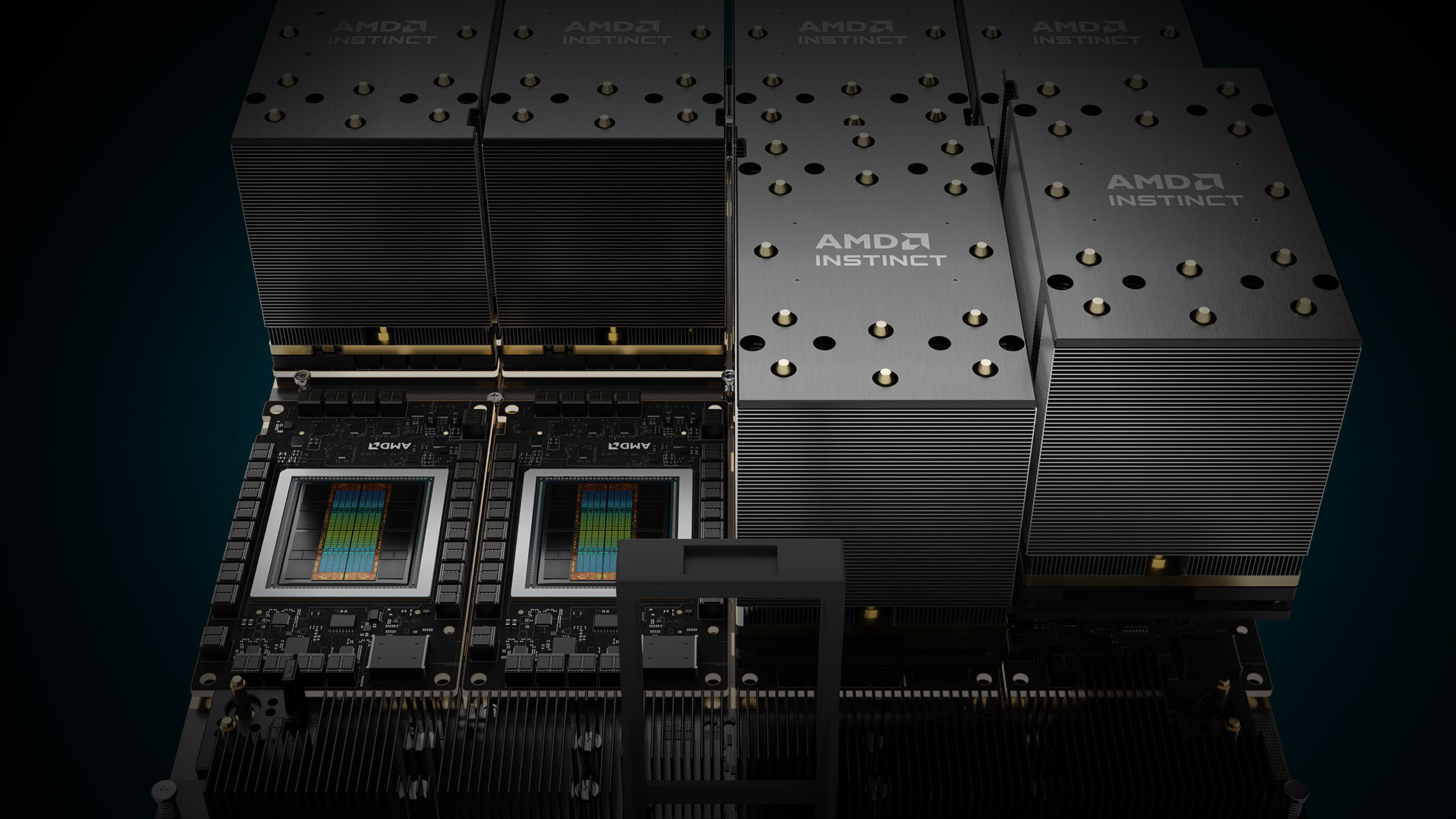

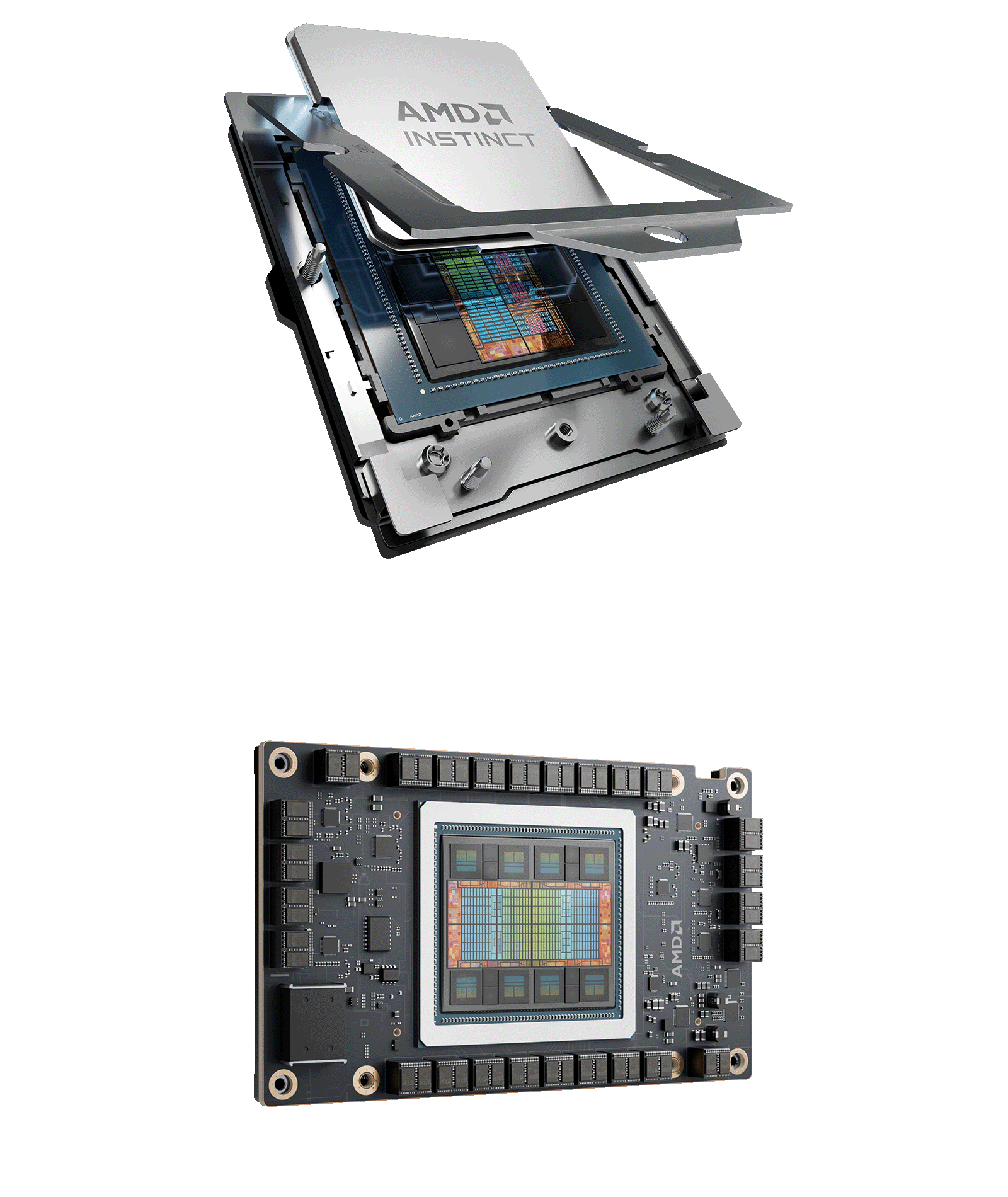

AMD Instinct™ MI300 Series

Accelerators for the Exascale Era

- Designed for the most demanding workloads, the AMD Instinct MI325X GPU delivers 256 GB of HBM3E memory and 6 TB/s of memory bandwidth. It combines high performance with improved power efficiency and supports matrix sparsity to accelerate AI training and inference.

- The AMD Instinct MI300A is the world's first unified data center APU. Its CPU and GPU cores share a single pool of HBM3 memory, which removes data-transfer bottlenecks between CPU and GPU, reduces programming overhead, and simplifies data management.

- El Capitan and Frontier, the world's fastest supercomputers, are powered by AMD EPYC™ processors and AMD Instinct™ GPUs and APUs. Both systems deliver outstanding performance and energy efficiency on the TOP500 and GREEN500 lists, underscoring AMD's leadership in HPC and AI acceleration.

| Model | MI325X GPU | MI300X GPU | MI300A APU |
|---|---|---|---|
| Form Factor | OAM module | OAM module | APU SH5 socket |
| AMD ‘Zen 4’ CPU cores | - | - | 24 |
| GPU Compute Units | 304 | 304 | 228 |
| Stream Processors | 19,456 | 19,456 | 14,592 |
| Peak FP64/FP32 Matrix* | 163.4 TFLOPS | 163.4 TFLOPS | 122.6 TFLOPS |
| Peak FP64/FP32 Vector* | 81.7/163.4 TFLOPS | 81.7/163.4 TFLOPS | 61.3/122.6 TFLOPS |
| Peak FP16/BF16* | 1307.4 TFLOPS | 1307.4 TFLOPS | 980.6 TFLOPS |
| Peak FP8* | 2614.9 TFLOPS | 2614.9 TFLOPS | 1961.2 TFLOPS |
| Dedicated Memory Size | 256 GB HBM3E | 192 GB HBM3 | 128 GB HBM3 |
| Memory Clock | 6.0 GHz | 5.2 GHz | 5.2 GHz |
| Memory Bandwidth | 6 TB/s | 5.3 TB/s | 5.3 TB/s |
| Bus Interface | PCIe Gen5 x16 | PCIe Gen5 x16 | PCIe Gen5 x16 |
| Infinity Fabric™ Links | 8 | 8 | 8 |
| Maximum TDP/TBP | 1000W | 750W | 550W / 760W (Peak) |
| Virtualization Support | Up to 8 partitions | Up to 8 partitions | Up to 3 partitions |

*Peak values shown without sparsity.

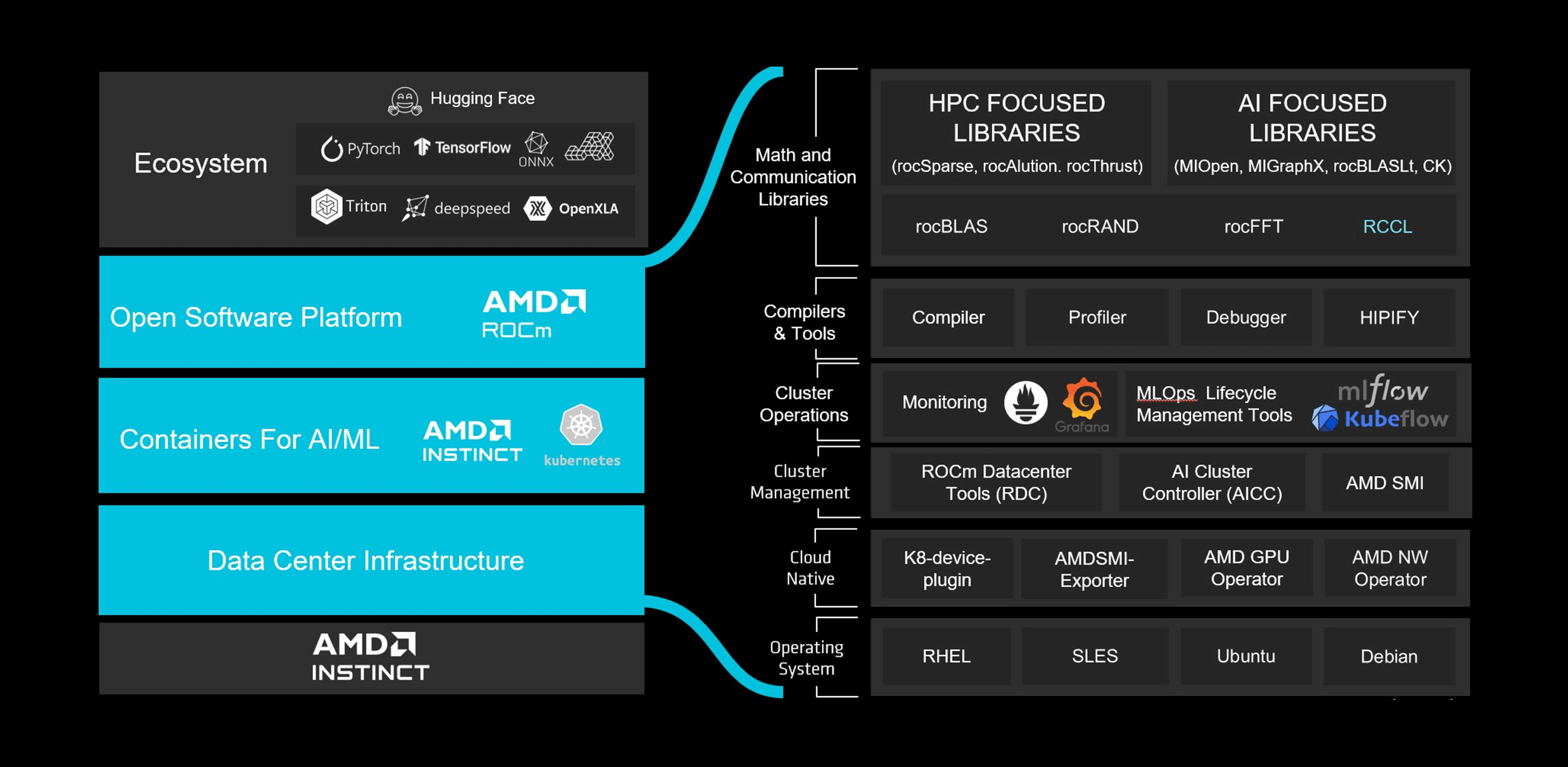

Optimize Next Gen Innovation with AMD ROCm™ 7.0


[1] (MI300-080): Testing by AMD as of May 15, 2025, measuring inference performance in tokens per second (TPS) of AMD ROCm 6.x software with vLLM 0.3.3 vs. AMD ROCm 7.0 preview software with vLLM 0.8.5, on a system with (8) AMD Instinct MI300X GPUs running Llama 3.1-70B (TP2), Qwen 72B (TP2), and DeepSeek-R1 (FP16) models with batch sizes of 1-256 and sequence lengths of 128-204. Stated performance uplift is expressed as the average TPS over the (3) LLMs tested. Results may vary.

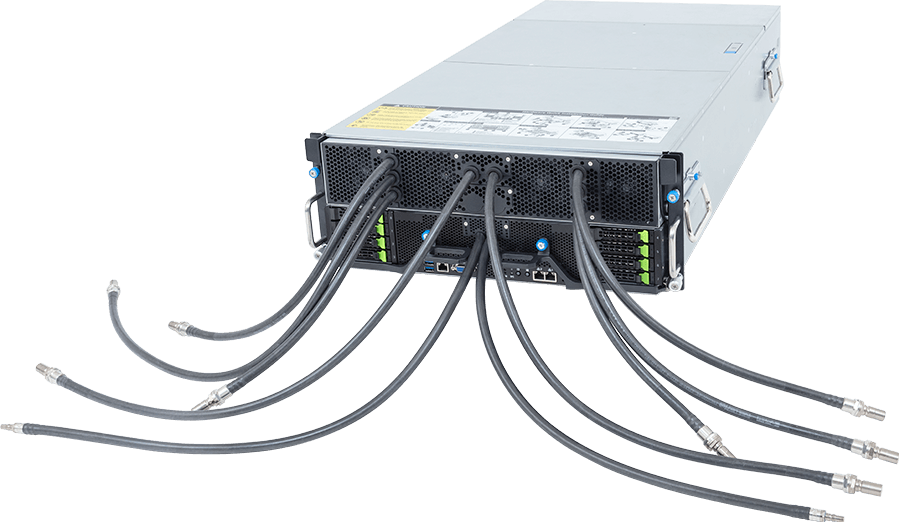

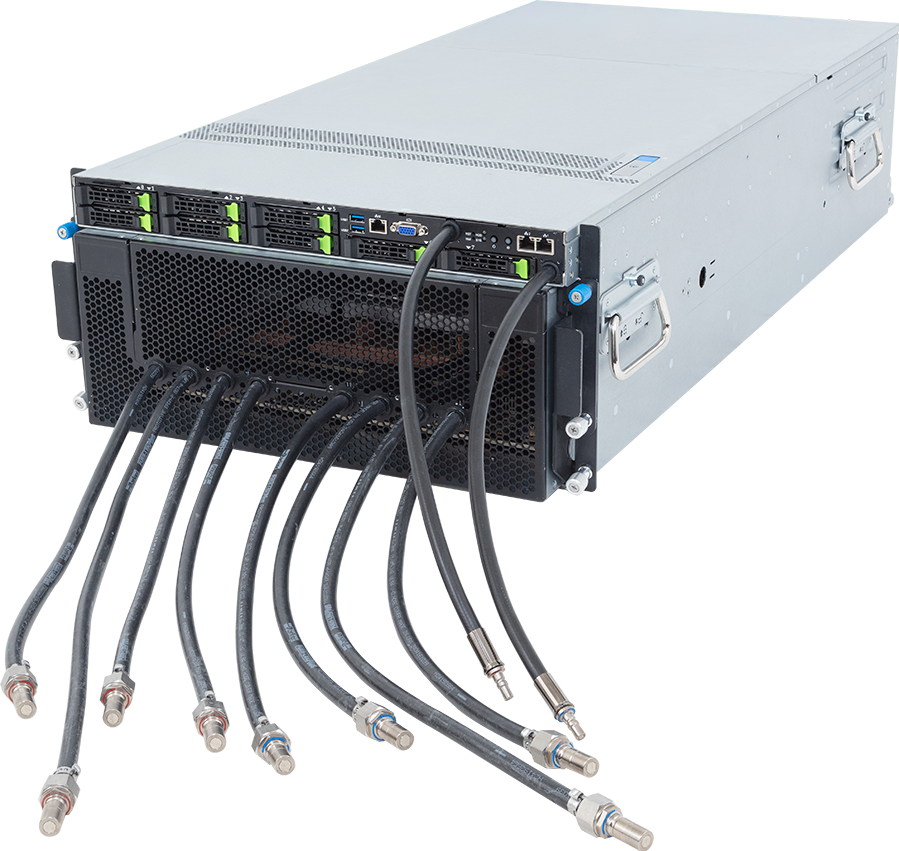

Select GIGABYTE for the AMD Instinct™ Platform

- Compute Dense
- High Performance
- Scale-out
- Advanced Cooling
- Energy Efficiency

Applications for AMD Instinct™ Solutions

- AI Inference
- Generative AI
- Agentic AI
- HPC
