Unleash an Integrated AI Data Center Solution with High Throughput and Exceptional Compute Performance

One of the most important considerations when planning a new AI data center is the selection of hardware, and in this AI era many companies treat the choice of GPU or accelerator as the foundation. Each of GIGABYTE’s industry-leading GPU partners (AMD, Intel, and NVIDIA) has developed advanced products built by its own team of researchers and engineers, and because each team is unique, each new GPU generation brings advances that suit it to particular customers and applications. The choice of GPU platform depends mostly on these factors: performance (AI training or inference), cost, availability, ecosystem, scalability, efficiency, and more. The decision isn’t easy, but GIGABYTE aims to provide choices, customization options, and the know-how to create ideal data centers that keep up with the demand for AI/ML models and their growing parameter counts.

NVIDIA HGX™ H200/B100/B200

Biggest AI Software Ecosystem

Fastest GPU-to-GPU Interconnect

AMD Instinct™ MI300X

Largest & Fastest Memory

Intel® Gaudi®

Excellence in AI Inference

Why Do You Need the GIGA POD Integrated Solution?

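- Resource integration: Close collaboration with technology partners ensures that customer requirements and timelines are met quickly.
- Diverse product portfolio: A wide range of high-density GPU servers that can be tailored to each user.
- High scalability: A high degree of flexibility with room for future expansion.
- High-performance computing: From a single GPU server to a cluster-scale data center, GIGABYTE delivers top-tier compute performance through optimized thermal design or liquid cooling.
- Expertise and service: Expertise in deploying large-scale AI data centers, with one-stop service from consulting through construction and deployment.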

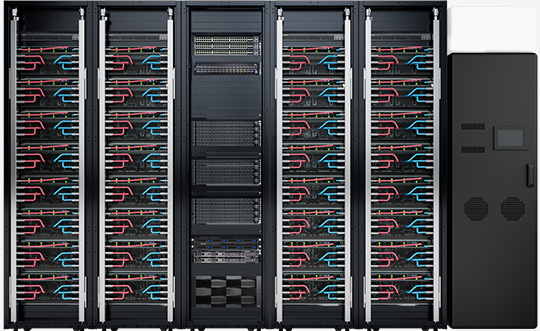

The Future of AI Computing in Data Centers

GIGABYTE enterprise products excel not only at reliability, availability, and serviceability but also at flexibility, whether in the choice of GPU, rack dimensions, cooling method, or more. GIGABYTE is familiar with every imaginable type of IT infrastructure, hardware, and scale of data center. Many GIGABYTE customers decide on a rack configuration based on how much power their facility can provide to the IT hardware and how much floor space is available. This is why the GIGA POD service came to be: customers have choices. Starting with how the components are cooled and how the heat is removed, customers can select either traditional air cooling or direct liquid cooling (DLC).

| Ver. | GPUs Supported | GPU Server (Form Factor) | GPU Servers per Rack | Rack | Power Consumption per Rack | RDHx |
|---|---|---|---|---|---|---|
| 1 | NVIDIA HGX™ H100/H200/B100, AMD Instinct™ MI300X | 5U | 4 | 9 x 42U | 50 kW | No |
| 2 | NVIDIA HGX™ H100/H200/B100, AMD Instinct™ MI300X | 5U | 4 | 9 x 48U | 50 kW | Yes |
| 3 | NVIDIA HGX™ H100/H200/B100, AMD Instinct™ MI300X | 5U | 8 | 5 x 48U | 100 kW | Yes |
| 4 | NVIDIA HGX™ B200/H200 | 8U | 4 | 9 x 42U | 130 kW | No |
| 5 | NVIDIA HGX™ B200/H200 | 8U | 4 | 9 x 48U | 130 kW | No |

| Ver. | GPUs Supported | GPU Server (Form Factor) | GPU Servers per Rack | Rack | Power Consumption per Rack | CDU |
|---|---|---|---|---|---|---|
| 1 | NVIDIA HGX™ H100/H200/B100, AMD Instinct™ MI300X | 5U | 8 | 5 x 48U | 100 kW | In-rack |
| 2 | NVIDIA HGX™ H100/H200/B100, AMD Instinct™ MI300X | 5U | 8 | 5 x 48U | 100 kW | External |
| 3 | NVIDIA HGX™ B200/H200 | 4U | 8 | 5 x 42U | 130 kW | In-rack |
| 4 | NVIDIA HGX™ B200/H200 | 4U | 8 | 5 x 42U | 130 kW | External |
| 5 | NVIDIA HGX™ B200/H200 | 4U | 8 | 5 x 48U | 130 kW | In-rack |
| 6 | NVIDIA HGX™ B200/H200 | 4U | 8 | 5 x 48U | 130 kW | External |
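Since many customers pick a rack configuration from the power their facility can supply and the floor space available, the two tables above can be treated as a small lookup. The following is a minimal sketch in Python, not a GIGABYTE tool: the `PodConfig` type, the `feasible` function, and the three limit parameters are hypothetical names introduced for illustration, and the only data used are the rack count, rack height, servers per rack, and per-rack power figures listed in the tables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PodConfig:
    table: str             # which table the row comes from: "RDHx" or "CDU"
    version: int           # the "Ver." column
    server_height_u: int   # GPU server form factor, in rack units
    servers_per_rack: int
    racks: int             # number of racks, e.g. 9 in "9 x 42U"
    rack_height_u: int     # rack height, e.g. 42 in "9 x 42U"
    kw_per_rack: int       # listed power consumption per rack


# Rows transcribed from the two tables above (hypothetical representation).
CONFIGS = [
    PodConfig("RDHx", 1, 5, 4, 9, 42, 50),
    PodConfig("RDHx", 2, 5, 4, 9, 48, 50),
    PodConfig("RDHx", 3, 5, 8, 5, 48, 100),
    PodConfig("RDHx", 4, 8, 4, 9, 42, 130),
    PodConfig("RDHx", 5, 8, 4, 9, 48, 130),
    PodConfig("CDU", 1, 5, 8, 5, 48, 100),
    PodConfig("CDU", 2, 5, 8, 5, 48, 100),
    PodConfig("CDU", 3, 4, 8, 5, 42, 130),
    PodConfig("CDU", 4, 4, 8, 5, 42, 130),
    PodConfig("CDU", 5, 4, 8, 5, 48, 130),
    PodConfig("CDU", 6, 4, 8, 5, 48, 130),
]


def feasible(configs, max_kw_per_rack, max_racks, max_rack_height_u):
    """Return the configurations that fit the facility limits.

    The limits are assumptions supplied by the caller: the power the
    facility can deliver to one rack, the number of rack positions on
    the floor, and the tallest rack the room can accept.
    """
    return [
        c for c in configs
        if c.kw_per_rack <= max_kw_per_rack
        and c.racks <= max_racks
        and c.rack_height_u <= max_rack_height_u
    ]


if __name__ == "__main__":
    # Example facility: 100 kW per rack, five rack positions, 48U clearance.
    for c in feasible(CONFIGS, max_kw_per_rack=100, max_racks=5, max_rack_height_u=48):
        print(f"{c.table} table, Ver. {c.version}: {c.racks} x {c.rack_height_u}U at {c.kw_per_rack} kW per rack")
```

The sketch deliberately filters only on the listed per-rack figures. It does not estimate total pod power or total GPU count, because the tables do not say whether every rack in a configuration holds GPU servers.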

Applications for AI Data Centers

Featured Products

Strong Top to Bottom Ecosystem for Success