March 18, 2026 - Giga Computing, a subsidiary of GIGABYTE Technology and a leader in accelerated computing and infrastructure solutions, today announced it will showcase its latest AI infrastructure portfolio covering edge AI, enterprise AI development, and rack-scale AI factories at CloudFest 2026, taking place in Rust, Germany. Visitors can experience these innovations at Booth E03.

At the event, GIGABYTE will demonstrate how integrated compute, networking, and advanced thermal engineering enable cloud providers, hosting companies, and enterprises to deploy and scale AI services efficiently.

As demand for generative AI, digital twins, and intelligent cloud services continues to grow, infrastructure must deliver both extreme performance and operational efficiency. GIGABYTE addresses these needs with a comprehensive portfolio designed for AI factories, supercomputing, cloud hosting, and edge AI deployments.

Built on compute technologies from NVIDIA and AMD, GIGABYTE’s platforms integrate advanced GPU acceleration, high-speed networking, and optimized cooling architectures to support next-generation AI workloads at scale.

Must-See Highlights at GIGABYTE Booth E03:

Featured NVIDIA-Accelerated Solutions

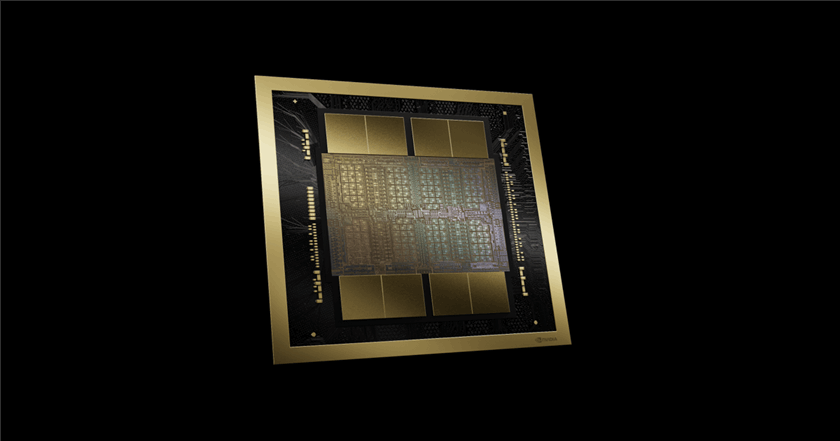

At CloudFest, GIGABYTE will showcase a range of NVIDIA-based AI platforms designed to support applications from edge inference to large-scale AI training.

- AI TOP ATOM

Mini AI supercomputer powered by the NVIDIA GB10 Grace Blackwell Superchip and the NVIDIA AI software stack. It supports models with up to 200 billion parameters, delivers up to 1 petaFLOP of FP4 AI performance, and enables low-latency AI inference and private AI development at the edge.

- W775-V10 AI Workstation

Powered by the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, this liquid-cooled system delivers up to 20 petaFLOPS of FP4 compute performance and supports AI models with up to one trillion parameters, bringing datacenter-class capabilities to workstation environments.

GIGABYTE and NVIDIA are also working together on NVIDIA NemoClaw, an open-source stack that lets users run OpenClaw always-on assistants more safely with a single command. Part of the NVIDIA Agent Toolkit, NemoClaw installs the NVIDIA OpenShell runtime, a secure environment for running autonomous agents, along with open-source models such as NVIDIA Nemotron.

As desktop AI compute becomes increasingly capable, NVIDIA NemoClaw enables a new class of always-on AI agents that run directly on local hardware. Use cases include personal research assistants, workflow automation agents, and private coding assistants. NemoClaw simplifies the deployment of these agents for individuals and small teams, reducing reliance on enterprise-scale cloud infrastructure while keeping data private and giving users full control over their AI workloads. GIGABYTE's personal AI supercomputers provide the desktop compute foundation for the growing shift toward agentic AI.

- XL44-SX2-AAS1

Designed for digital twin and high-availability operations, this system integrates RTX PRO™ 6000 Blackwell GPU accelerators with the NVIDIA ConnectX®-8 SuperNIC to deliver high-performance networking and real-time simulation capabilities.

- G4L3-SD1 Rack Solution

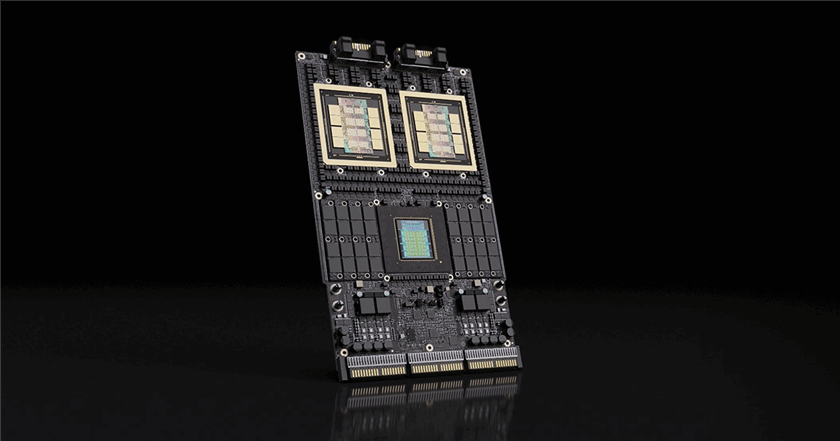

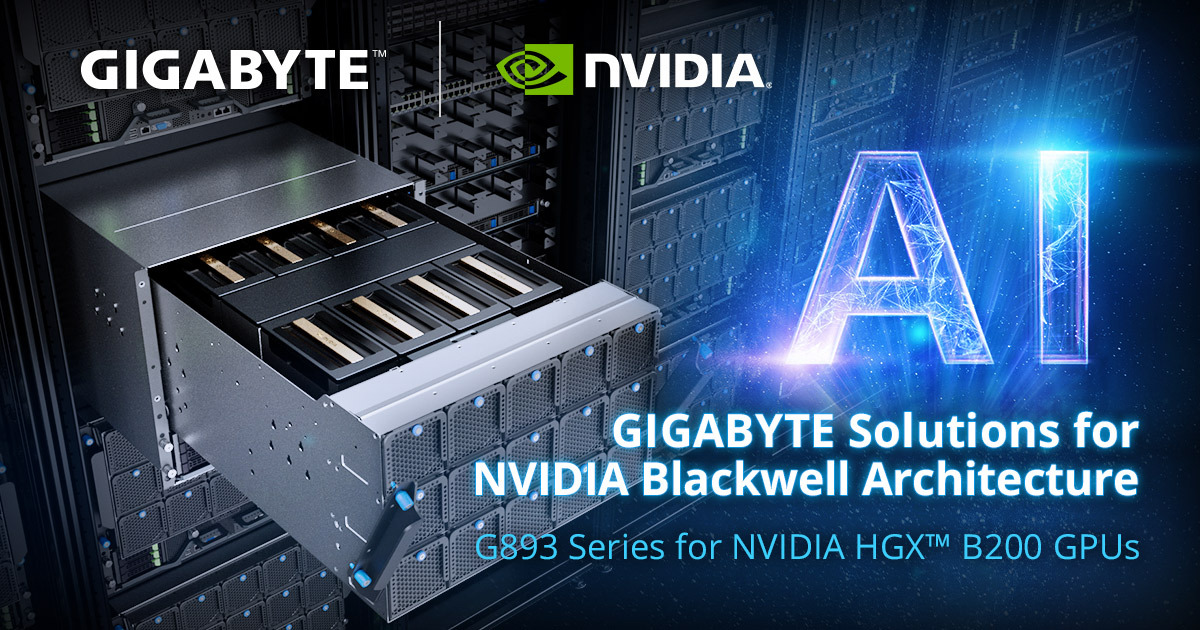

A powerful rack solution supporting NVIDIA HGX B200 GPUs with 5th/4th Gen Intel® Xeon® Scalable processors, designed for demanding AI training and inference workloads.

- GB300 NVL72 AI Factory Platform

GIGABYTE will also highlight the rack-scale GB300 NVL72 platform, integrating 72 Blackwell Ultra GPUs and 36 Grace CPUs with liquid cooling to deliver massive performance for hyperscale AI deployments.

Redefining High-Density Compute with AMD EPYC

Complementing its GPU-accelerated platforms, GIGABYTE will also demonstrate high-density server solutions powered by AMD EPYC processors:

- B683-Z80-LAS1 - A 6U blade server with full-system direct liquid cooling, designed for large-scale AI hosting deployments

- B343-C40 - A 3U 10-node air-cooled system powered by AMD EPYC 4005 Series processors, optimized for scalable cloud and hosting services

These systems enable cloud providers to maximize compute density, improve energy efficiency, and streamline large-scale deployments.

Keynote Presentation

GIGABYTE will present a keynote session addressing next-generation infrastructure design:

Innovation for 800G-Ready POD Design in Container DC

- Date: March 26

- Time: 11:55-12:05

- Location: Studio Stage

AI Infrastructure Tour

Attendees can also visit GIGABYTE during the AI Infrastructure & Accelerated Compute Tour, highlighting key technologies enabling AI-driven cloud services.

AI Infrastructure & Accelerated Compute Tour

- Date: March 24

- Time: 15:25-16:10

- Meeting Point: WebPros Cloud Pavilion, Registration Point

The tour will focus on the hardware backbone required for AI workloads, including compute, memory, storage, cooling, networking, and datacenter engineering solutions.

Attendees are invited to visit Booth E03 at CloudFest 2026 to experience GIGABYTE’s AI infrastructure innovations across edge devices, enterprise AI, and datacenter-scale deployments.

For queries or more information, please contact sales.