NVIDIA GB200 NVL4

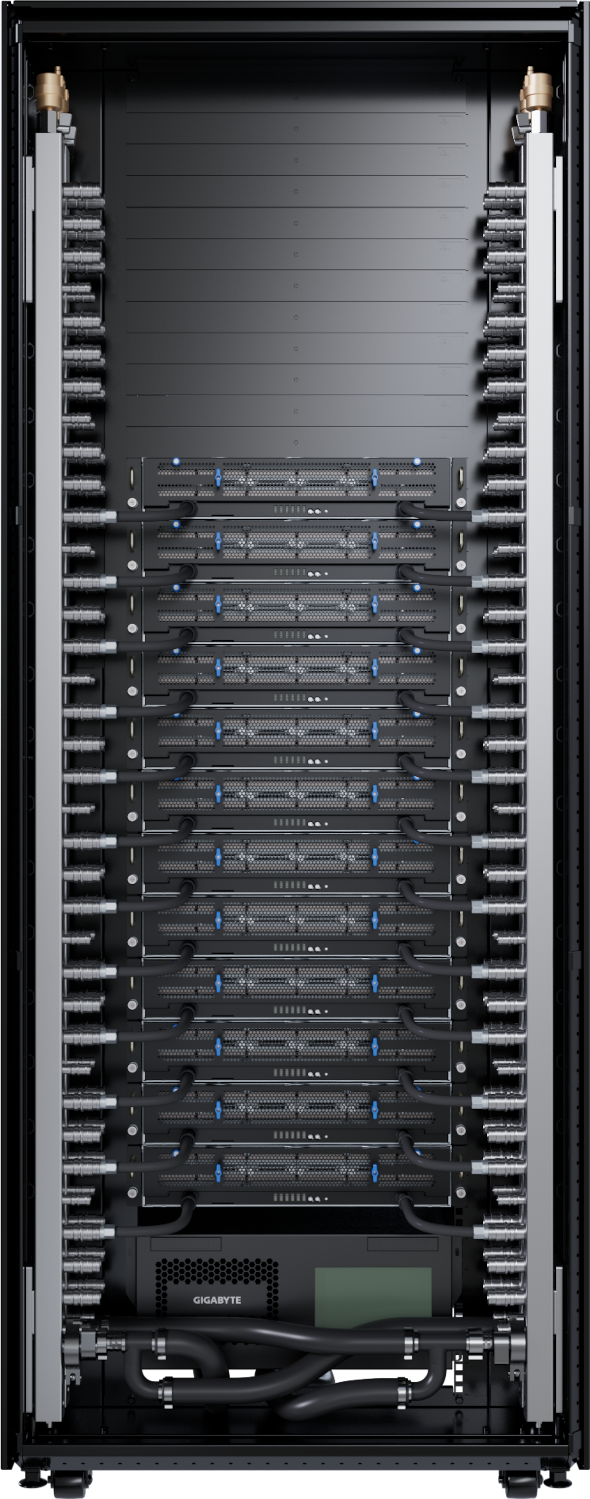

GIGAPOD AI DLC Rack Scale Solution

- Built on the NVIDIA GB200 NVL4 platform

- 48 x NVIDIA Blackwell GPUs and 24 x NVIDIA Grace™ CPUs

- 11.5 TB of LPDDR5X ECC memory

- 8.9 TB HBM3E GPU memory

- Integrated NVIDIA ConnectX®-8 SuperNIC™

- Up to 80 kW rack power consumption

- Reserved space for InfiniBand / Ethernet switches

- Integrated G-REX cluster-level DLC power and cooling management kit

- Compatible with GIGABYTE POD Manager software for cluster management / orchestration

- Supports flexible storage with GIGABYTE storage servers and validated partner solutions
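As a rough sanity check on the headline figures above, the rack totals can be divided down to per-device values. This is a minimal sketch, not a vendor specification: it assumes the memory is spread evenly across devices and that 1 TB = 1000 GB, and all variable names are illustrative.

```python
# Rack-level totals taken from the feature list above.
GPUS = 48
CPUS = 24
LPDDR5X_TB = 11.5   # total CPU-attached memory
HBM3E_TB = 8.9      # total GPU memory

GB_PER_TB = 1000    # assumption: decimal units

# Assumption: memory is distributed evenly across devices.
assert GPUS % CPUS == 0
gpus_per_cpu = GPUS // CPUS
hbm_gb_per_gpu = HBM3E_TB * GB_PER_TB / GPUS
lpddr_gb_per_cpu = LPDDR5X_TB * GB_PER_TB / CPUS

print(f"GPUs per Grace CPU: {gpus_per_cpu}")
print(f"HBM3E per GPU:      ~{hbm_gb_per_gpu:.0f} GB")
print(f"LPDDR5X per CPU:    ~{lpddr_gb_per_cpu:.0f} GB")
```

The per-device values are approximate because the rack totals are rounded to one decimal place.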

Configuration

| DL83-MG0 | |
|---|---|
| Cooling Type | Direct Liquid Cooling |
| Rack Height | 42U |
| Server Model | |
| Server Configuration | 12 x 2U DLC GPU servers |
| CPU | 24 x NVIDIA Grace™ CPUs |
| GPU | 48 x NVIDIA Blackwell GPUs |
| Switch | Flexible validated InfiniBand / Ethernet switch configurations |
| PDU | 4 x PDUs<br>Input: 415 V, 100 A, 3-phase |
| Rack Power Consumption | Up to 80 kW |
| CDU | In-Rack Liquid-to-Liquid CDU<br>Cooling Capacity: 100 kW (5°C approach temperature)<br>Primary Loop Connections: 1" FD83 QDC couplings (inlet/outlet)<br>Input Power: 2 x C20, AC 100-240 V, 50/60 Hz<br>1+1 redundant power supplies and pumps |
| Management | Integrated G-REX DLC power and cooling management kit:<br>- 1 x N120-LS0 management node<br>- Real-time cluster-level monitoring and control<br>- Supports Modbus, Redfish, and REST APIs for seamless integration with CDUs and RDHx<br>Optional GIGABYTE POD Management (GPM) software suite:<br>- Essential single-node BMC for real-time hardware health and remote system oversight<br>- Unified cluster-scale management and deep telemetry for high-density deployments<br>- Total POD-level orchestration for infrastructure provisioning and environmental monitoring |
| Storage Solutions | Flexible validated partner solutions with GIGABYTE storage servers |
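The PDU and CDU ratings in the table can be compared against the 80 kW rack figure with some simple arithmetic. The sketch below is illustrative only. It assumes the 415 V rating is a line-to-line voltage, applies the standard three-phase apparent power formula S = √3 · V · I, and ignores breaker derating, power factor, and however the four PDUs are divided between redundant feeds, none of which the table states.

```python
import math

# Ratings taken from the configuration table.
PDU_COUNT = 4
PDU_VOLTS = 415        # assumption: line-to-line voltage
PDU_AMPS = 100
RACK_POWER_KW = 80     # "up to" figure
CDU_CAPACITY_KW = 100  # at 5°C approach temperature

# Three-phase apparent power per PDU: S = sqrt(3) * V_LL * I
pdu_kva = math.sqrt(3) * PDU_VOLTS * PDU_AMPS / 1000
total_kva = PDU_COUNT * pdu_kva

# Cooling margin between CDU capacity and maximum rack power.
cdu_headroom_kw = CDU_CAPACITY_KW - RACK_POWER_KW

print(f"Per-PDU apparent power: {pdu_kva:.1f} kVA")
print(f"All PDUs combined:      {total_kva:.1f} kVA")
print(f"CDU cooling headroom:   {cdu_headroom_kw} kW")
```

Under these assumptions a single PDU feed is rated at roughly 72 kVA, and the CDU retains 20 kW of cooling capacity above the maximum rack power.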

[#1] All materials provided herein are for reference only. GIGABYTE reserves the right to modify or revise the content at any time without prior notice.

[#2] Advertised performance is based on maximum theoretical values as specified by the respective chipset vendors or standards organizations. Actual performance may vary depending on system configuration.

[#3] All trademarks and logos are the property of their respective owners.