Unleash a Turnkey AI Data Center with High Performance GPU Compute

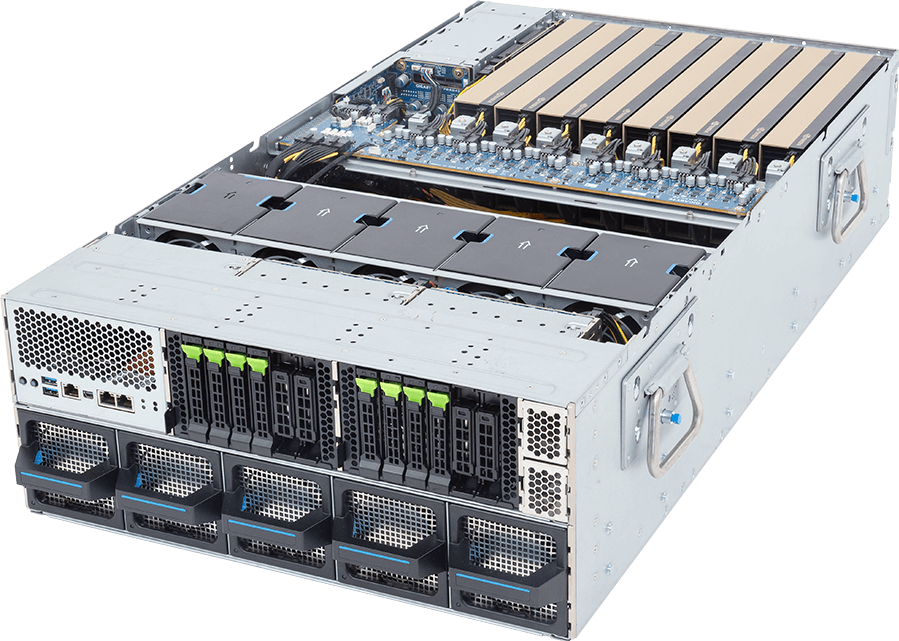

GIGABYTE has been pivotal in providing technology leaders with supercomputing infrastructure built on high-performance GPU servers. Our solutions are accelerated by the latest NVIDIA, AMD Instinct, and Intel Gaudi 3 accelerator platforms. GIGAPOD is a service that provides professional support to create a cluster of racks all interconnected as a cohesive unit. An AI ecosystem platform thrives on a high degree of parallel processing, with each GPU server optimized for high-speed internal communication using technologies such as NVIDIA NVLink™ or AMD Infinity Fabric™, while scalable inter-rack connectivity enables coordinated performance across the entire cluster.

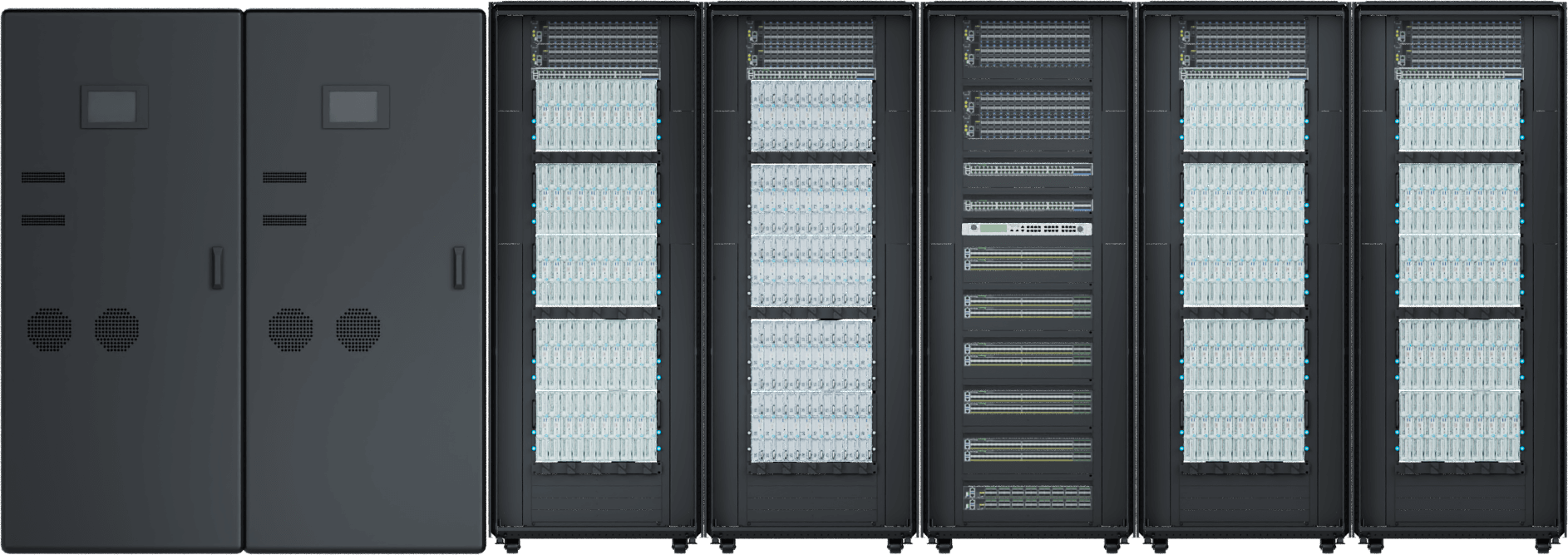

800G-Ready Enterprise AI Data Center Cluster

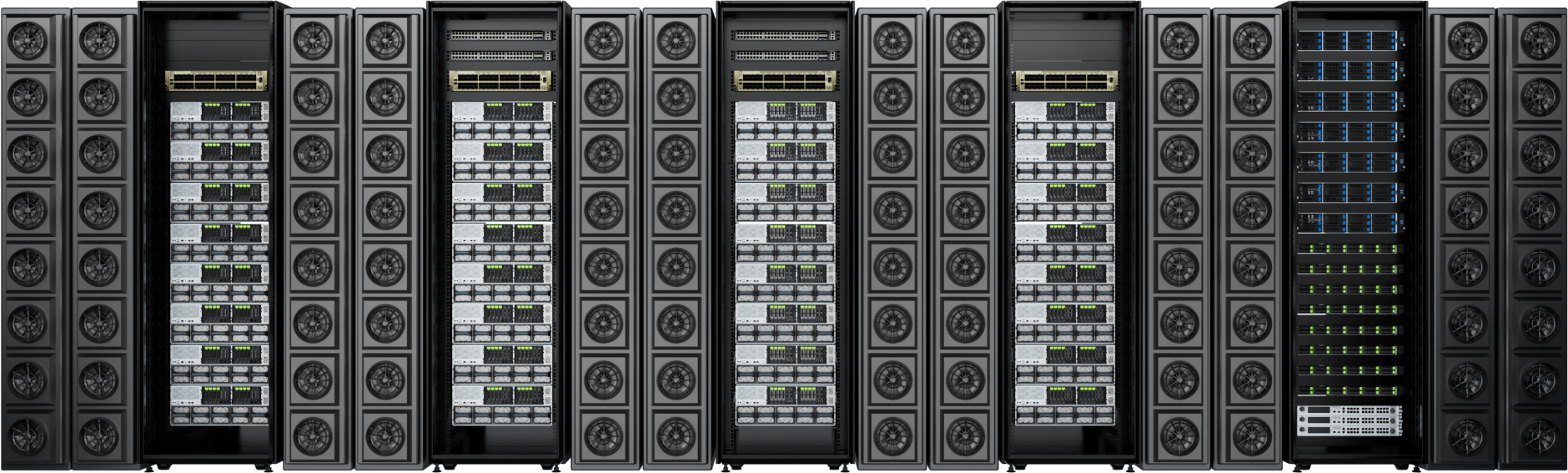

GIGAPOD AI: Complete GPU Ecosystem that Offers the Foundation for AI Infrastructure

GIGABYTE provides a comprehensive, rack-scale ecosystem that moves beyond individual servers to offer fully integrated solutions. Whether for massive AI factories or the burgeoning age of AI reasoning, GIGAPOD ensures that your choice of technology is backed by extreme performance and optimized thermal efficiency.

The GIGAPOD total solution is rounded out with specialized management servers for infrastructure oversight and control, as well as the proprietary GPM software suite for DCIM, workload orchestration, MLOps, and more.

POD for AI Training & AI Factories

Air CoolingLiquid Cooling

Supported GPUs | Server Height | Power Consumption per Rack | No. of Racks per SU* | PDU per Rack |

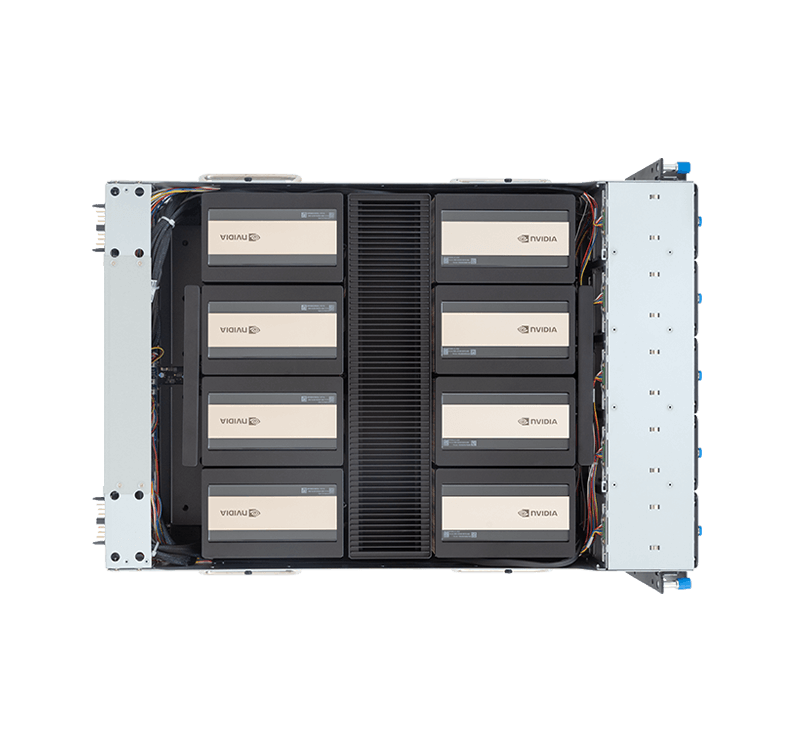

| NVIDIA HGX™ B300 | 8U | 70 kW / 66 kW | 8 + 2 / 8 + 1 | 8 x 63A / 4 x 63A |

| NVIDIA HGX™ B200 | 8U / 8OU | 70 kW / 54 kW | 8 + 1 | 8 x 63A / 4 x 63A |

| NVIDIA HGX™ H200 | 8U | 58 kW | 8 + 1 | 4 x 63A |

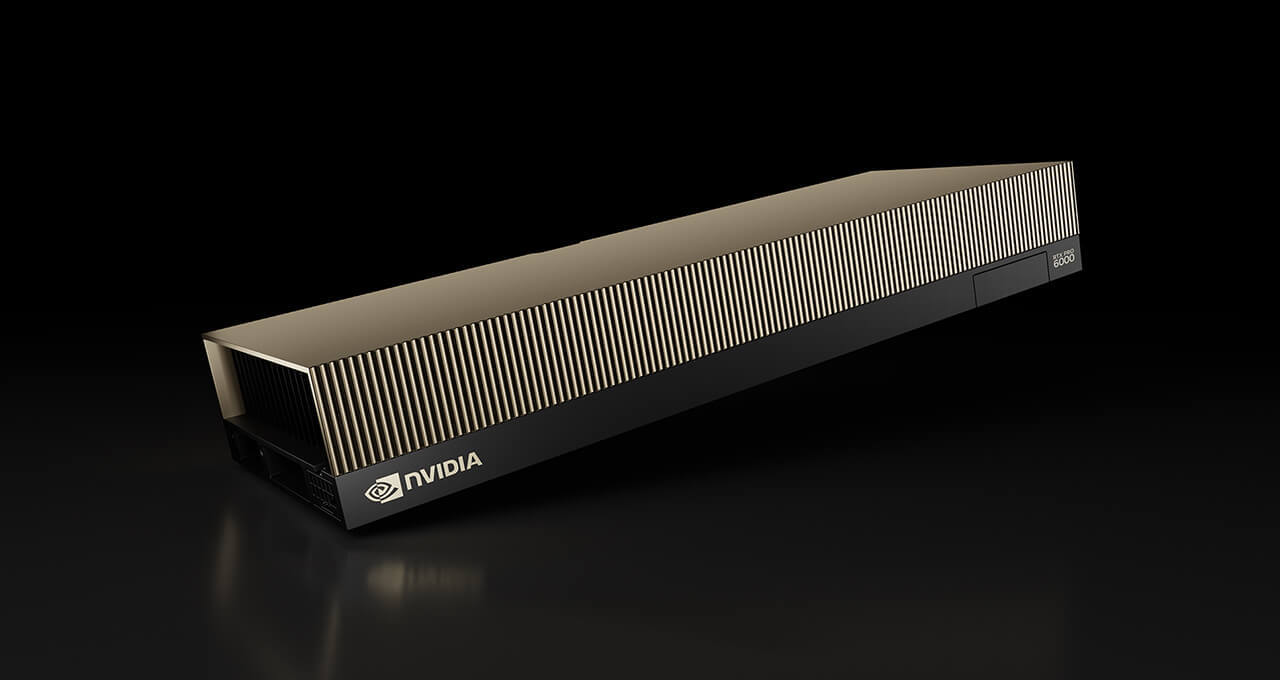

| NVIDIA RTX PRO™ 6000 Blackwell Edition | 4U | 80 kW | 4 + 1 | 4 x 63A |

| AMD Instinct™ MI350 Series | 8U | 70 kW | 8 + 1 | 4 x 63A |

| AMD Instinct™ MI300 Series | 5U / 8U | 50kW - 100kW | 4 + 1 / 8 + 1 | 2 x 100A / 4 x 63A |

| Intel® Gaudi® 3 | 8U | 62kW | 8 + 1 | 4 x 63A |

* # Compute Racks + # Management Rack

Scalable High-Density Supercomputing with Intelligent Scale-Out Management

GIGABYTE is at the forefront of enabling technology leaders with high-performance supercomputing infrastructure built on world-class CPU platforms from AMD and Intel. GIGAPOD is a professional service designed to create a cluster of racks, all interconnected as a cohesive unit and optimized for the most demanding scientific and cloud computing environments.

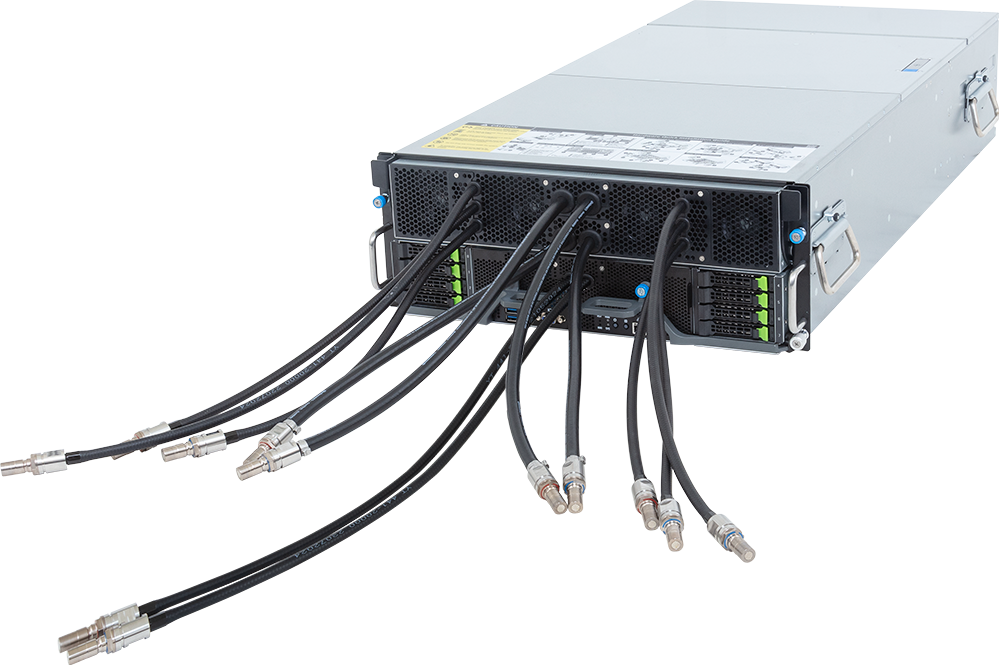

Real-time Monitoring with Intelligent Redfish‑enabled DLC Management

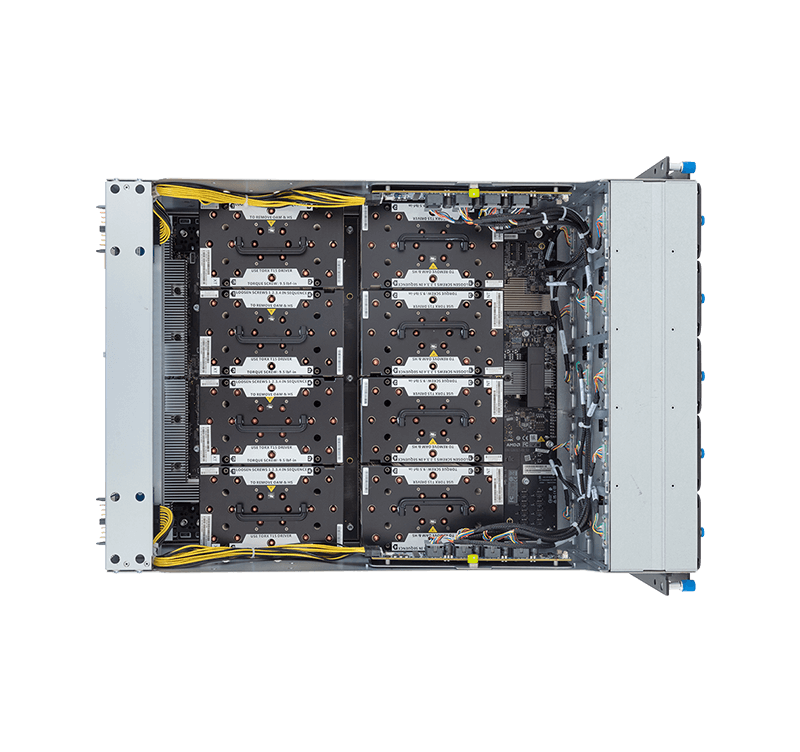

To ensure sustained performance in high-density, multi-node setups, GIGAPOD HPC utilizes a full-system Direct Liquid Cooling (DLC) solution with integrated piping that achieves up to 91% system heat coverage. Validated for up to 105kW per rack, this thermal architecture removes heat directly at the source, eliminating performance bottlenecks while significantly improving energy efficiency in compute-intensive environments.

Learn More: GIGABYTE Direct Liquid Cooling Solution

GIGAPOD HPC: Leading CPU Platforms & Technical Foundation

Optimized for the most demanding scientific simulations and engineering analyses, this scalable architecture provides a stable foundation for national research centers and cloud infrastructures. Through multi-node configurations, GIGAPOD HPC leverages modular deployment to ensure rapid time-to-solution while maintaining peak operational efficiency.

The GIGAPOD ecosystem is complemented by specialized management servers and the proprietary GPM software suite, empowering IT personnel with centralized control over DCIM and workload orchestration for maximum operational efficiency.

POD for Engineering & Research Clusters

Liquid Cooling

Rack Height / No. of Nodes | Supported Systems | No. of Systems per Rack | Power Consumption per Rack | PDU per Rack | CDU |

| 42U / 60 Nodes | H174-A80-LAS1 | 30 | 105kW | 4 x 100A | In-Row In-Rack |

| H274-A81-LAZ1 H274-S61-LAW1 | 15 | ||||

| 42U / 50 Nodes | B683-Z80-LAS1 B684-A80-LAS1 | 5 | 90kW | 4 x 100A | In-Row In-Rack |

Why is GIGAPOD the rack scale service to deploy?

Industry Connections

GIGABYTE works closely with technology partners - AMD, Intel, and NVIDIA - to ensure a fast response to customer requirements and timelines.

Depth in Portfolio

GIGABYTE servers (GPU, Compute, Storage, & High-density) have numerous SKUs that are tailored for all imaginable enterprise applications.

Scale Up or Out

A turnkey high-performing data center has to be built with expansion in mind so new nodes or processors can effectively become integrated.

High Performance

From a single GPU server to a cluster, GIGABYTE has tailored its server and rack design to guarantee peak performance with optional liquid cooling.

Experienced

GIGABYTE has experience successfully deploying large GPU clusters and is ready to discuss the process and provide a timeline that fulfills customer requirements.

Discover GIGAPOD

From one GIGABYTE GPU server to multi-rack configuration, GIGAPOD has the infrastructure to scale, achieving a high-performance supercomputer. Cutting-edge data centers are deploying AI factories, and it all starts with a GIGABYTE GPU server.

GIGAPOD is more than just a bunch of GPU servers, the complete solution offers hardware, software, and services to deploy with ease.

GIGABYTE POD Manager (GPM)

Integrated with GIGABYTE POD Manager (GPM), our proprietary infrastructure and workflow management platform, GIGAPOD streamlines AI and HPC operations with real-time monitoring, orchestration, and automation—delivering complete data center solutions from hardware to cluster management.

Learn More: Simplify POD Management Tools

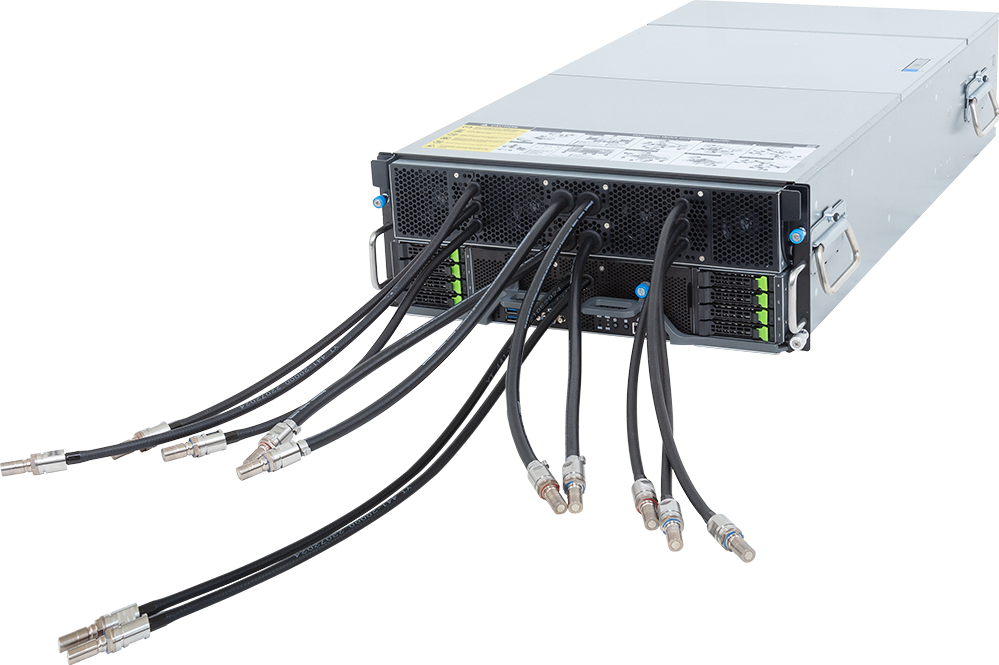

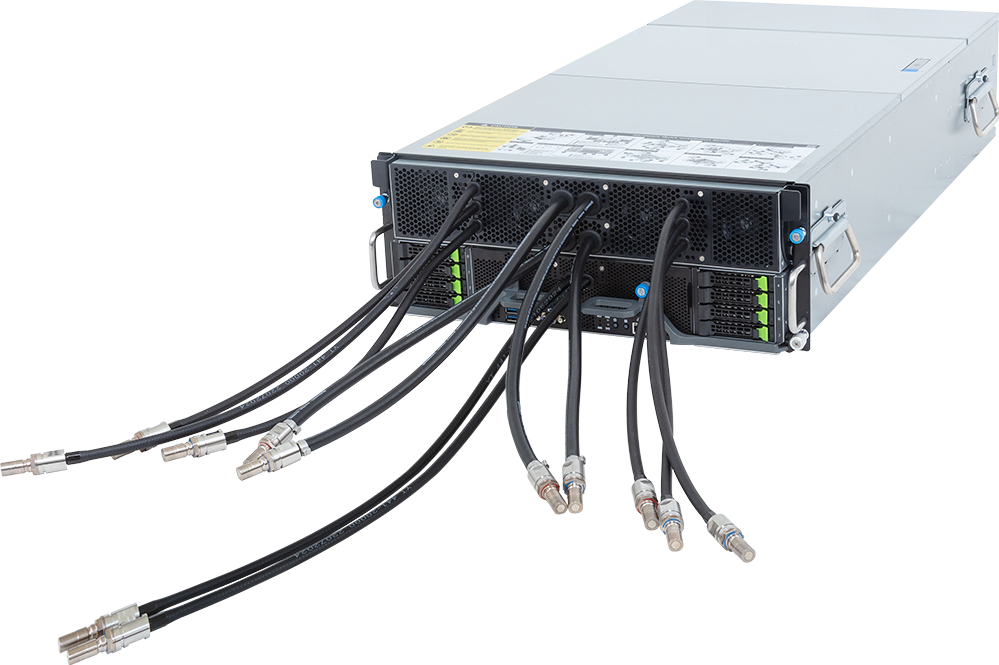

GIGAPOD: Maximizing Compute Density with Advanced DLC Technology

Experience the power of GIGAPOD, our scalable high-density cluster featuring industry-leading 92% liquid-cooling heat capture coverage. Designed for a minimum footprint with an impressive 1.1 PUE, it integrates Redfish-enabled intelligent management for real-time monitoring and proactive leak detection across all 1U, 2U, and 6U configurations.

Learn More: GIGABYTE Direct Liquid Cooling Solution

Strong Top to Bottom Ecosystem for Success

This comprehensive enterprise ecosystem provides the necessary expertise, resources, innovation, and interoperability to build, deploy, and maintain cutting-edge, large-scale AI infrastructure. GIGABYTE continues to expand its partners who are leaders in their respective fields, to ensure that rack-scale projects don’t get stuck at any phase. Customers will quickly reap the fruits of their investment through a developed and evolving ecosystem of partners.

ComputeNetworkingStorageCoolingData Center Construction

FAQ

How do I know which GIGAPOD to choose from?

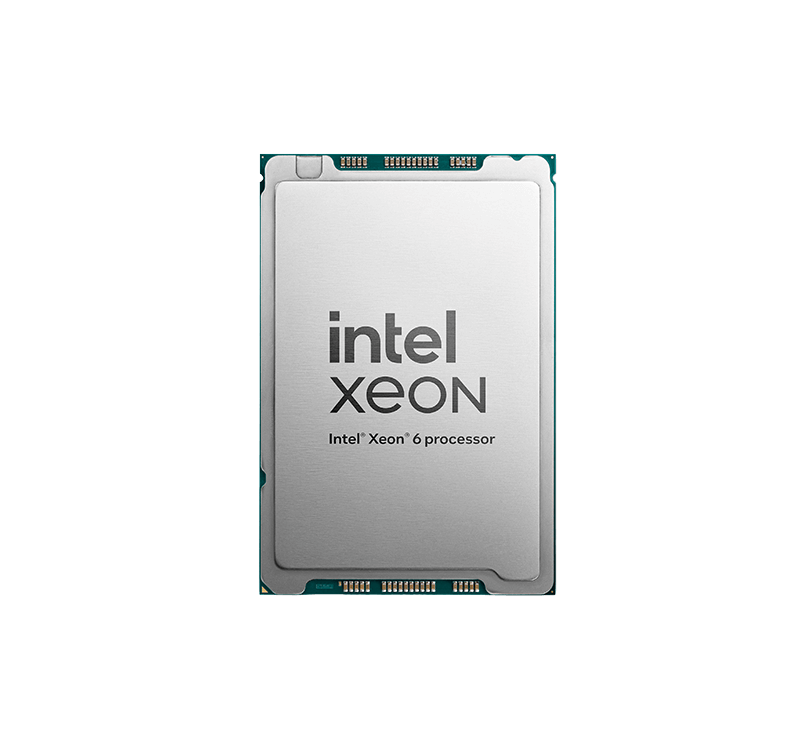

The ideal GIGAPOD configuration depends primarily on your specific workload and existing infrastructure goals. For organizations focused on the frontier of artificial intelligence, GIGAPOD AI is our flagship solution for large-scale model training and real-time reasoning, supporting the latest accelerators for peak performance and efficiency. If your focus is on research-intensive environments, GIGAPOD HPC provides a scalable architecture optimized for scientific simulations and engineering analysis, prioritizing CPU compute density with AMD EPYC™ or Intel® Xeon® platforms.

To provide a truly comprehensive data center ecosystem, we are also expanding our lineup with specialized solutions. GIGAPOD Edge, designed for localized inference and enterprise R&D, and GIGAPOD Storage, focused on high-density data infrastructure, are coming soon. If you are unsure which configuration fits your current deployment stage, contact our Giga Computing experts to help tailor the ideal solution for you.

What's the difference between air-cooled and liquid-cooled GIGAPOD?

The primary distinction between these two configurations lies in their thermal efficiency and the resulting compute density they can support within a single rack.

・Liquid-Cooled GIGAPOD: Uses Direct Liquid Cooling (DLC) to manage the high TDP of next-generation chips. By removing heat directly at the source, this solution enables much higher rack density and superior Power Usage Effectiveness (PUE), ensuring that your cluster maintains sustained peak performance without the risk of thermal throttling.

・Air-Cooled GIGAPOD: Optimized for traditional data center environments that use conventional airflow and do not yet have liquid cooling infrastructure. While it supports a lower power density compared to its liquid-cooled counterpart, it is engineered for maximum reliability and ease of integration into existing facilities, providing a robust path for organizations to scale their compute capabilities within traditional infrastructure constraints.

Can GIGAPOD be customized for specific data center standards?

Absolutely. GIGAPOD is engineered to be a flexible foundation that adapts to your existing data center architecture. We offer a high degree of integration flexibility to meet both global standards and facility-specific constraints:

・Standard & Framework Compliance: We fully support EIA as well as Open Rack V3 (ORV3) standards, ensuring seamless integration for hyperscale and standardized cloud environments.

・Electrical & Power Tailoring: For facilities with unique power densities, we support Modular Busway systems. This allows superior heat dissipation and plug-and-play power scaling tailored to your specific rack power limits.

・Infrastructure Adaptation: GIGABYTE provides bespoke DLC and piping designs to match your physical layout, whether you require overhead or underfloor routing, ensuring a perfect fit with your existing chilled water loops.

Does every GIGAPOD include a management node for leak detection?

Yes. Every GIGAPOD includes a dedicated intelligent DLC management tool (Redfish-enabled) providing real-time monitoring as an integral part of the turnkey solution. This management node is engineered to provide a robust safety layer and centralized infrastructure oversight. While the management system serves as a primary defense for leak detection and prevention, its capabilities also include real-time performance monitoring for Coolant Distribution Units (CDUs), PDUs, and Rear Door Heat Exchangers (RDHx).

This integration ensures that power distribution and thermal management are always synchronized and operating at peak efficiency. By utilizing these management nodes, GIGAPOD ensures that high-density clusters remain operationally secure by proactively identifying and addressing liquid-related risks before they impact critical hardware.