All Data Can Be AI Value

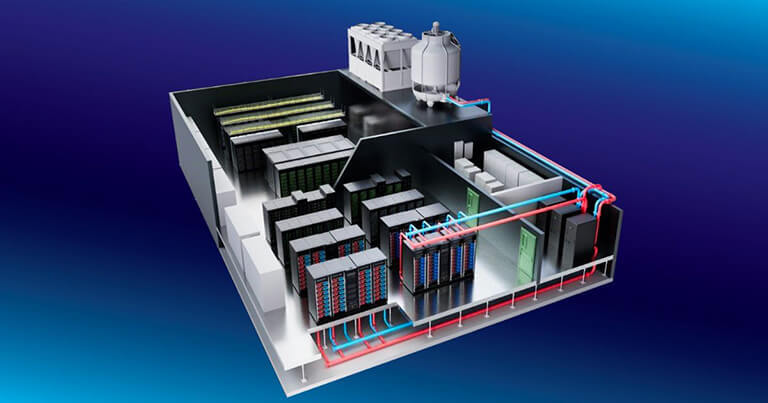

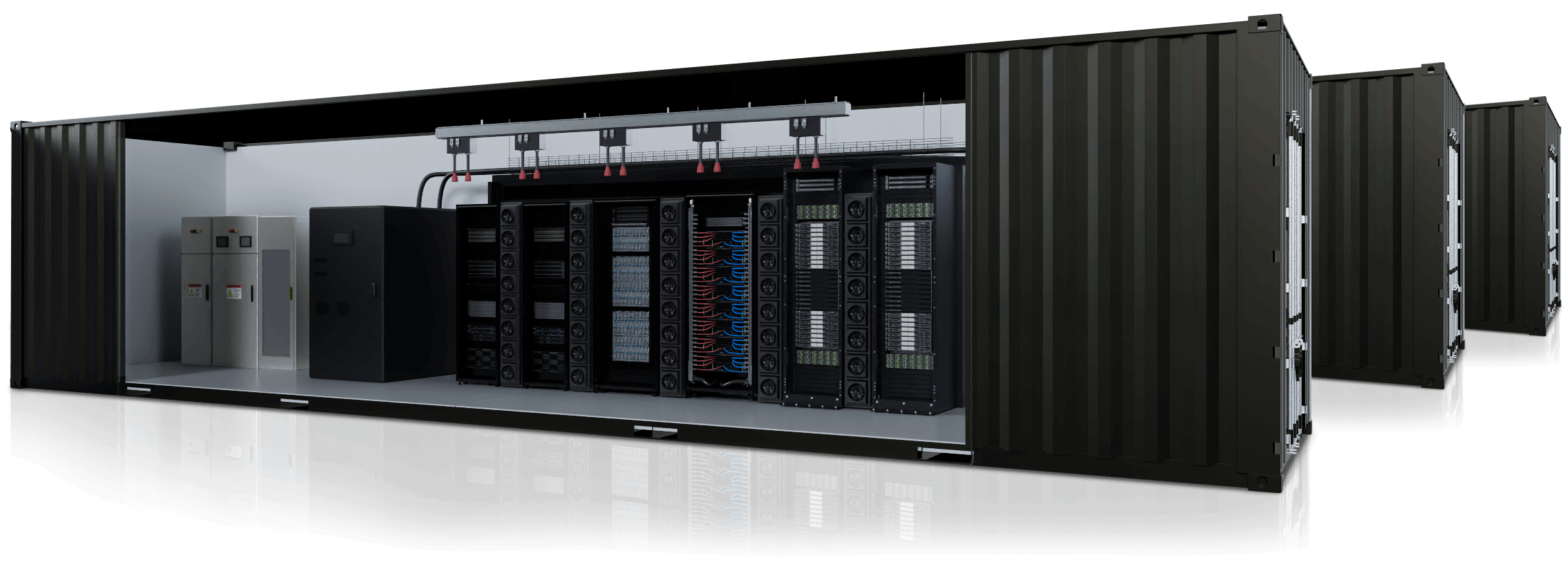

AI factories are a type of IT infrastructure that transforms organizational data into AI inventions and propels industry leaders ahead of the competition. GIGABYTE Technology, an end-to-end data center infrastructure and AI solution provider, can help you establish your AI factory. Our time-tested, world-renowned hardware and software portfolio can turn data pipelines into round-the-clock generators of smart value. GIGABYTE AI Factory Accelerator (GAIFA)—our self-designed, self-built, Taiwan-based AI factory—can expedite testing, validation, and deployment, putting you on track to enjoy unprecedented AI success.

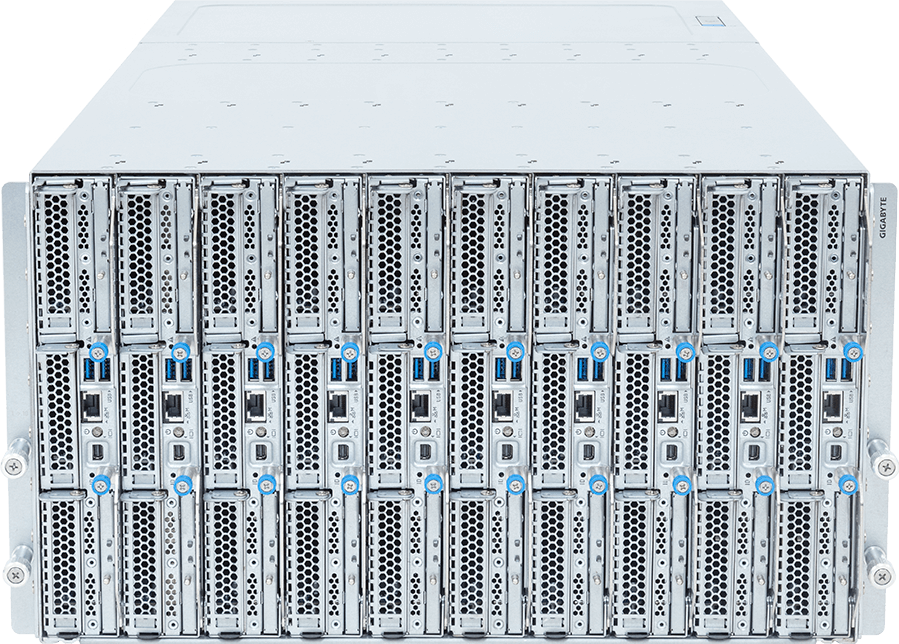

Develop Your AI Foundation with GIGAPOD

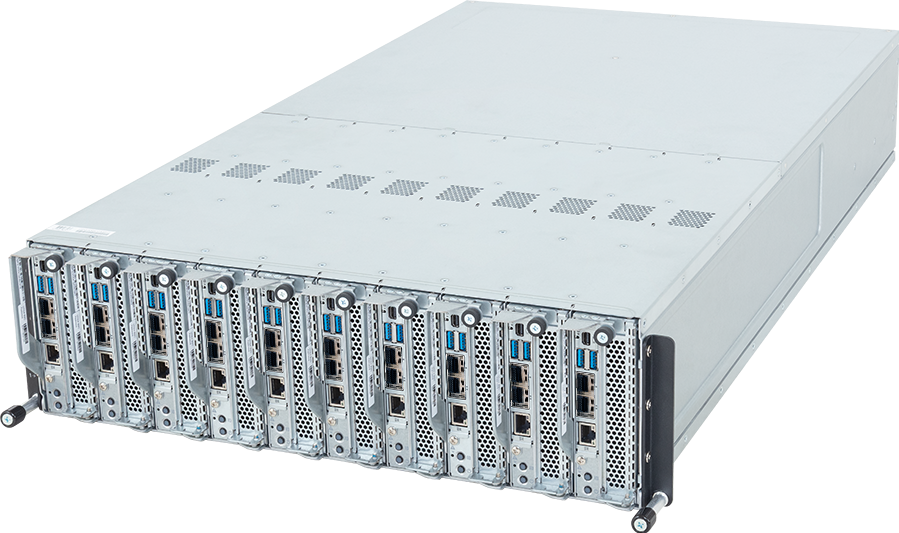

At the core of the AI factory, scalable supercomputing clusters convert big data into AI tokens at high speed by using GPU-centric configurations designed for deep learning and other AI training methodologies. GIGABYTE's GIGAPOD combines multiple arrays of state-of-the-art GPUs through high-speed interconnect technology to form a cohesive unit that serves as the building block of modern AI. Clients can choose among AMD Instinct™, Intel® Gaudi®, and NVIDIA HGX™ GPU modules, and between air cooling and liquid cooling, to balance investment against performance. GIGAPOD is rounded out with centralized control for infrastructure oversight, the middleware platform G-REX for power and cooling management, proprietary GPM software for DCIM and workload orchestration, and pre-integrated single-rack GIGAPOD Rack Scale systems for faster deployment and activation.

Air-Cooled / Liquid-Cooled

*Compute Racks + Management Rack

| Supported GPUs | Server Height | Power Consumption per Rack | No. of Racks per SU* | PDU per Rack |
|---|---|---|---|---|
| NVIDIA HGX™ B300 | 8U | 70 kW / 66 kW | 8 + 2 / 8 + 1 | 8 x 63A / 4 x 63A |
| NVIDIA HGX™ B200 | 8U / 8OU | 70 kW / 54 kW | 8 + 1 | 8 x 63A / 4 x 63A |
| NVIDIA HGX™ H200 | 8U | 58 kW | 8 + 1 | 4 x 63A |
| NVIDIA RTX PRO™ 6000 Blackwell Edition | 4U | 80 kW | 4 + 1 | 4 x 63A |
| AMD Instinct™ MI350 Series | 8U | 70 kW | 8 + 1 | 4 x 63A |
| AMD Instinct™ MI300 Series | 5U / 8U | 50 kW - 100 kW | 4 + 1 / 8 + 1 | 2 x 100A / 4 x 63A |
| Intel® Gaudi® 3 | 8U | 62 kW | 8 + 1 | 4 x 63A |
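
For a rough sense of what the table means for facility planning, the per-rack figures can be multiplied out to a per-SU compute power estimate. The sketch below is illustrative only: the `GigapodConfig` class is a hypothetical helper, it uses only the rows that list a single value per column, and it assumes the per-rack power figure applies to compute racks alone, because the table does not state the management rack's draw.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GigapodConfig:
    """One table row (hypothetical helper, not a GIGABYTE API)."""
    gpu: str
    compute_racks: int   # first number in "No. of Racks per SU"
    kw_per_rack: float   # "Power Consumption per Rack"

    def compute_power_kw(self) -> float:
        # Assumption: management rack power is unknown and excluded.
        return self.compute_racks * self.kw_per_rack


# Rows with a single, unambiguous value in each column.
configs = [
    GigapodConfig("NVIDIA HGX H200", 8, 58),
    GigapodConfig("AMD Instinct MI350 Series", 8, 70),
    GigapodConfig("Intel Gaudi 3", 8, 62),
    GigapodConfig("NVIDIA RTX PRO 6000 Blackwell", 4, 80),
]

for c in configs:
    print(f"{c.gpu}: {c.compute_power_kw():.0f} kW "
          f"across {c.compute_racks} compute racks")
```

Under these assumptions an H200-based SU works out to 464 kW of compute-rack power. Actual facility requirements also depend on the management rack, networking, and cooling overhead, none of which are given here.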

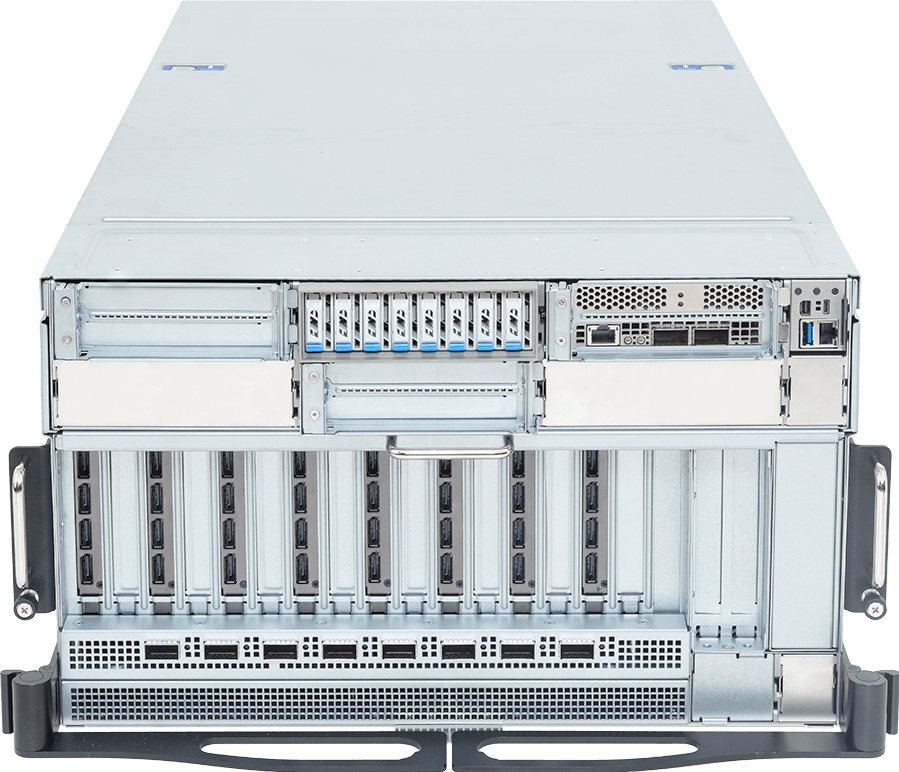

Achieve Reasoning AI with Rubin and Blackwell

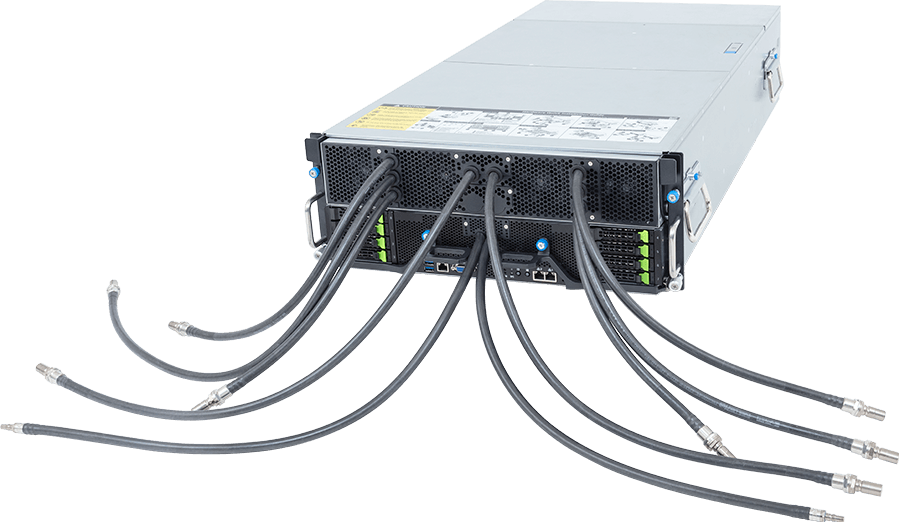

To successfully deploy AI at scale, you need supercomputing that can empower you with agentic and reasoning AI innovations. GIGABYTE works closely with NVIDIA to present Vera Rubin NVL72 and GB300 NVL72—liquid-cooled rack-scale supercomputers that accelerate state-of-the-art CPUs and GPUs with high-throughput communications and extreme compute density.

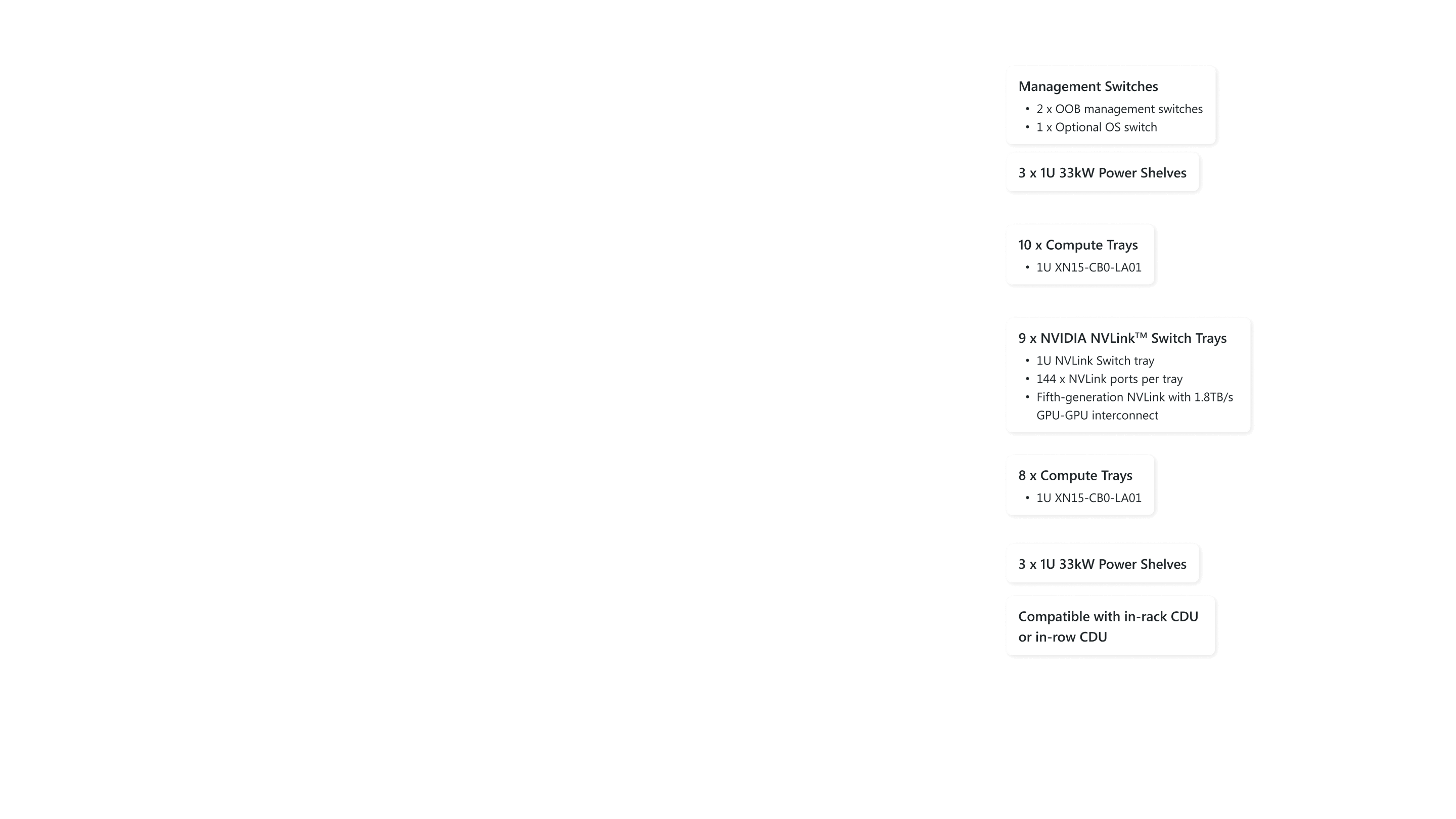

In the GB300 NVL72 (pictured), for example, 36 Arm®-based NVIDIA Grace™ CPUs and 72 NVIDIA Blackwell Ultra GPUs are interconnected through NVIDIA Quantum-X800 InfiniBand or Spectrum™-X Ethernet paired with NVIDIA ConnectX®-8 SuperNIC™ to handle the AI, HPC, and GPU-driven workloads that form the foundation of reasoning AI.

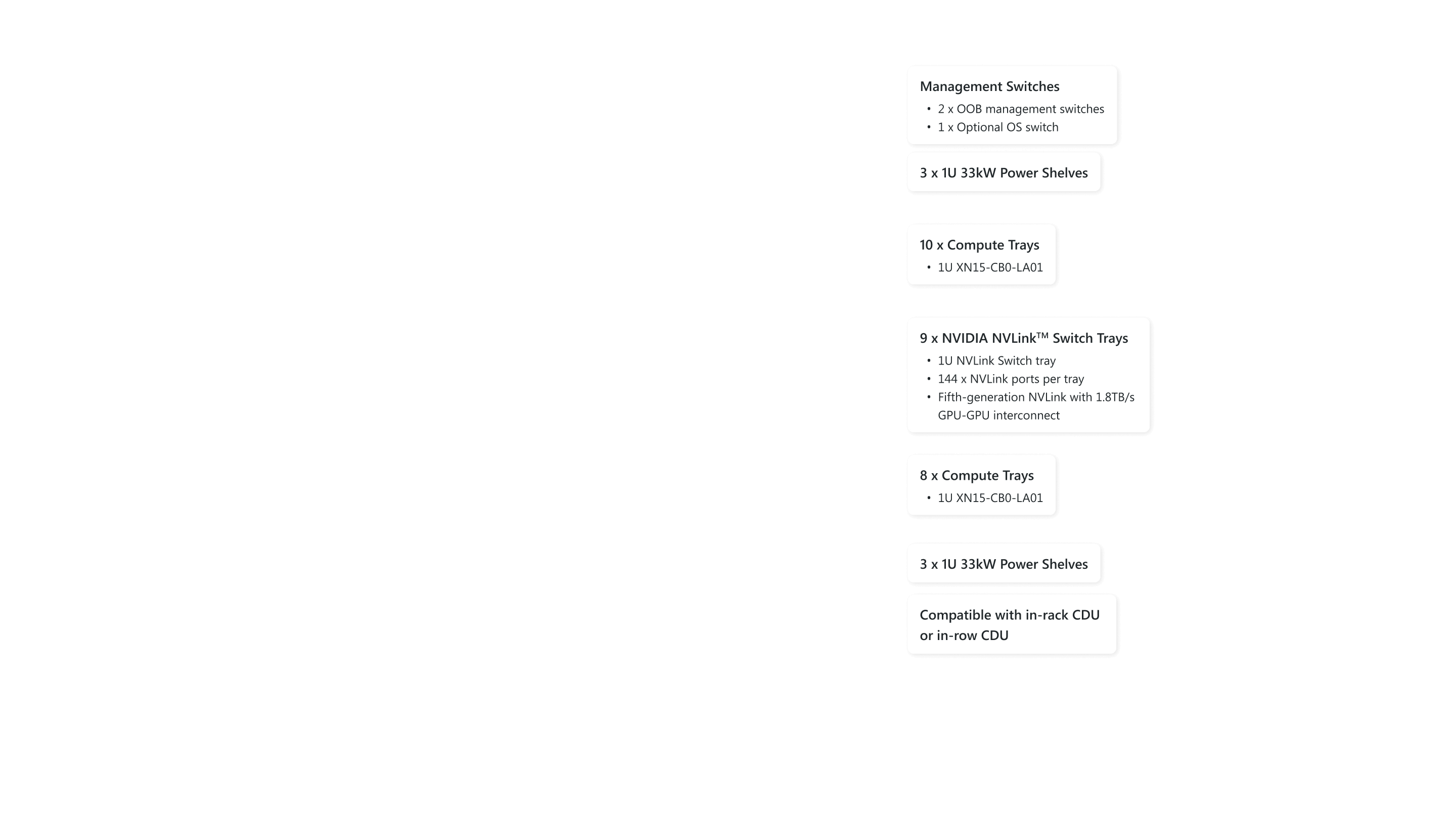

1. Management Switches
   - 2 x OOB management switches
   - 1 x Optional OS switch
2. 3 x 1U 33kW Power Shelves
3. 10 x Compute Trays
   - 1U XN15-CB0-LA01
4. 9 x NVIDIA NVLink™ Switch Trays
   - 1U NVLink Switch tray
   - 144 x NVLink ports per tray
   - Fifth-generation NVLink with 1.8TB/s GPU-GPU interconnect
5. 8 x Compute Trays
   - 1U XN15-CB0-LA01
6. 3 x 1U 33kW Power Shelves
7. Compatible with in-rack CDU or in-row CDU

- Fast Memory: 60X vs. NVIDIA HGX H100
- HBM Bandwidth: 20X vs. NVIDIA HGX H100
- Networking Bandwidth: 18X vs. NVIDIA HGX H100

XN15-CB0-LA01 Compute Tray

- 2 x NVIDIA GB300 Grace™ Blackwell Ultra Superchip

- 4 x 279GB HBM3E GPU memory

- 2 x 480GB LPDDR5X CPU memory

- 8 x E1.S Gen5 NVMe drive bays

- 4 x NVIDIA ConnectX®-8 SuperNIC™ 800Gb/s OSFP ports

- 1 x NVIDIA® BlueField®-3 DPU
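
The tray and rack figures above can be cross-checked against the "36 CPUs and 72 GPUs" headline with some simple arithmetic. The sketch below is a back-of-the-envelope check, not a specification. It assumes that each "279GB HBM3E" entry corresponds to one GPU, that each "480GB LPDDR5X" entry corresponds to one CPU, that the 1.8TB/s NVLink figure is per GPU, and that the power-shelf total is rated capacity rather than actual draw.

```python
# Figures copied from the rack layout and the XN15-CB0-LA01 tray spec.
COMPUTE_TRAYS = 10 + 8        # two groups of compute trays in the rack
GPUS_PER_TRAY = 4             # assumption: one GPU per "279GB HBM3E" entry
CPUS_PER_TRAY = 2             # assumption: one CPU per "480GB LPDDR5X" entry
HBM_GB_PER_GPU = 279
LPDDR_GB_PER_CPU = 480
NVLINK_GBPS_PER_GPU = 1800    # 1.8TB/s, assumed per GPU; GB/s keeps integers
POWER_SHELVES = 3 + 3
KW_PER_SHELF = 33

gpus = COMPUTE_TRAYS * GPUS_PER_TRAY
cpus = COMPUTE_TRAYS * CPUS_PER_TRAY
hbm_gb = gpus * HBM_GB_PER_GPU
lpddr_gb = cpus * LPDDR_GB_PER_CPU
fast_memory_tb = (hbm_gb + lpddr_gb) / 1000          # decimal terabytes
nvlink_tbps = gpus * NVLINK_GBPS_PER_GPU / 1000      # aggregate, TB/s
shelf_capacity_kw = POWER_SHELVES * KW_PER_SHELF     # rated, not measured

print(f"GPUs: {gpus}, CPUs: {cpus}")
print(f"HBM3E: {hbm_gb} GB, LPDDR5X: {lpddr_gb} GB, "
      f"combined: {fast_memory_tb:.1f} TB")
print(f"Aggregate NVLink bandwidth: {nvlink_tbps:.1f} TB/s")
print(f"Rated power-shelf capacity: {shelf_capacity_kw} kW")
```

With 18 trays this yields 72 GPUs and 36 CPUs, matching the NVL72 configuration described earlier, and roughly 37.4 TB of combined HBM3E and LPDDR5X memory.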

Inference at Scale with NVIDIA GB300 NVL72

The next step after developing your AI models is to build applications and tools on them and deploy those tools throughout your organization, where hundreds or even thousands of users might access them at any given moment. You need a superscale inference platform capable of handling a multitude of requests simultaneously, so that your AI success can supercharge productivity. NVIDIA GB300 NVL72 features a fully liquid-cooled, rack-scale design that unifies 72 NVIDIA Blackwell Ultra GPUs and 36 Arm®-based NVIDIA Grace™ CPUs in a single platform optimized for test-time scaling inference. AI factories powered by GB300 NVL72, using NVIDIA Quantum-X800 InfiniBand or Spectrum™-X Ethernet paired with NVIDIA ConnectX®-8 SuperNIC™, deliver 50x higher output for reasoning model inference than the NVIDIA Hopper™ platform, making them the undisputed leader in AI inference.

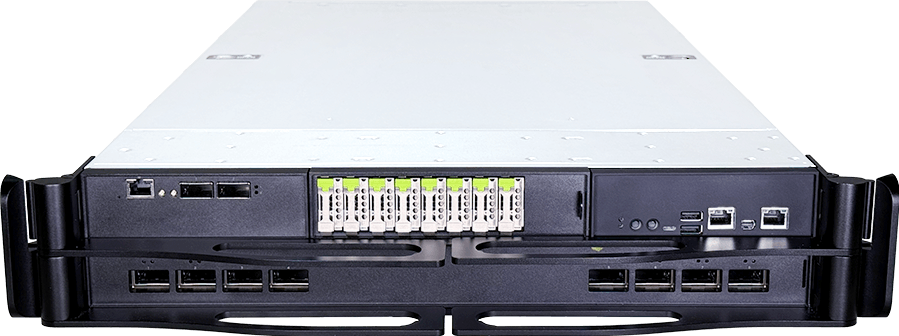

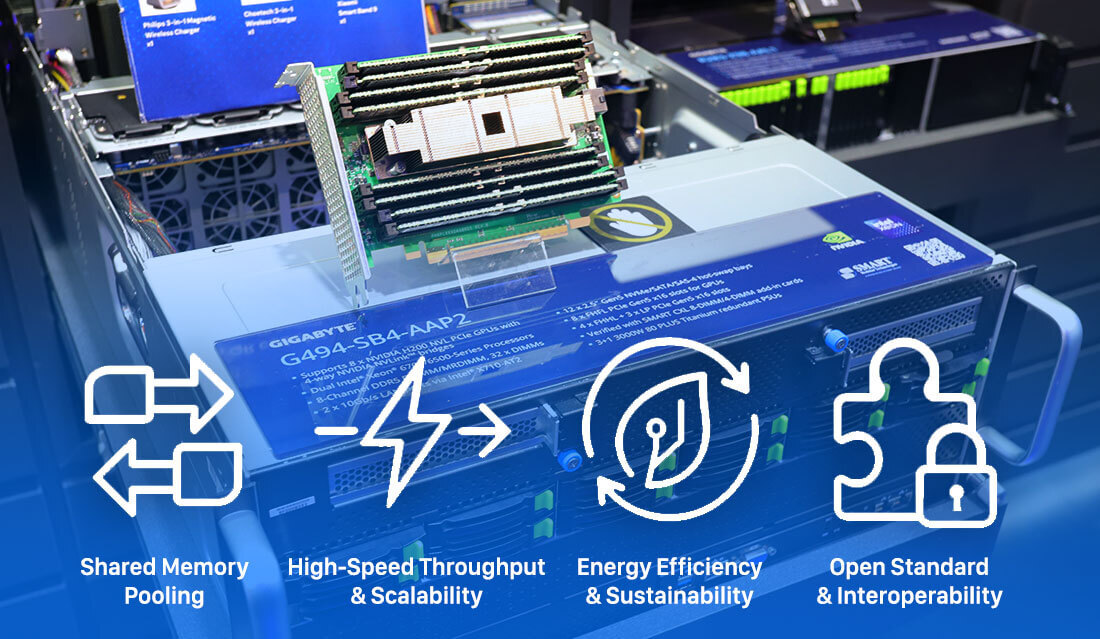

XL44-SX2-AAS1 with RTX PRO™ 6000 Blackwell Server Edition GPUs

- NVIDIA RTX PRO™ server with ConnectX®-8 SuperNIC switch

- Configured with 8 x NVIDIA RTX PRO™ 6000 Blackwell Server Edition GPUs

- Configured with 1 x NVIDIA® BlueField®-3 DPU

- Onboard 400Gb/s InfiniBand/Ethernet QSFP ports with PCIe Gen6 switching for peak GPU-to-GPU performance

- Dual Intel® Xeon® 6700/6500-Series Processors

- 8-Channel DDR5 RDIMM/MRDIMM, 32 x DIMMs

- 2 x 10Gb/s LAN ports via Intel® X710-AT2

- 8 x 2.5" Gen5 NVMe hot-swap bays

- 2 x M.2 slots with PCIe Gen4 x2 interface

- 3+1 3200W 80 PLUS Titanium redundant power supplies
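
Two of the figures in this list reduce to quick arithmetic: the power budget implied by "3+1" redundancy, and the DIMM population per memory channel. The sketch below assumes that "3+1" means three supplies carry the load while one is held as a spare, and that the 32 DIMM slots are split evenly across both processors and all eight channels. Neither assumption is stated explicitly in the list.

```python
# Values taken from the XL44-SX2-AAS1 spec list above.
PSU_ACTIVE, PSU_SPARE, PSU_WATTS = 3, 1, 3200
SOCKETS, CHANNELS_PER_SOCKET, DIMM_SLOTS = 2, 8, 32

# Power available with one supply reserved for redundancy (assumed N+1).
redundant_capacity_w = PSU_ACTIVE * PSU_WATTS
# Total installed capacity if all four supplies were counted.
installed_capacity_w = (PSU_ACTIVE + PSU_SPARE) * PSU_WATTS

# DIMMs per channel, assuming an even split across sockets and channels.
dimms_per_channel, leftover = divmod(DIMM_SLOTS, SOCKETS * CHANNELS_PER_SOCKET)

print(f"Redundant power budget: {redundant_capacity_w} W "
      f"(installed: {installed_capacity_w} W)")
print(f"DIMMs per channel: {dimms_per_channel} (leftover slots: {leftover})")
```

Under these assumptions the server has a 9600 W redundant power budget and two DIMMs per channel.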