Introduction

The time has come for Virtual Desktop Infrastructure (VDI) with virtual GPUs (vGPU) to take hold. Workers and devices are scattered, and employees often need to work remotely, which creates challenges in security, productivity, user adoption, and IT management. At the same time, more engineers and designers rely on 3D applications and other graphics-accelerated applications that handle large amounts of data. In the past, CPU-only VDI environments were deployed to centralize management; however, these solutions only served office workers who did not need graphics acceleration, while power users, designers, and others were left out and had to stick with dedicated machines. Now, by combining VDI with vGPU, users who need graphics acceleration have a solution as well. GIGABYTE has many servers specifically designed for VDI usage, covering productivity applications, 3D modeling, HPC applications, AI, and more.

Who needs VDI with Virtual GPUs?

Challenges to VDI

Several industries have been quick to adopt VDI. Healthcare is one example: it needed greater compliance with regulations, and remote access became a must. However, companies pondering a switch of their servers to VDI face some major challenges.

When we say companies need to adopt new practices, we are focusing on the individuals. The switch from a personal device that is always yours to one that you simply log in to can unsettle many users. Add the fact that not all applications can be virtualized, and you have users who do not want to adopt a new way of using a PC. Employees must be shown and convinced that the change is worth it in the long term. Upfront costs are also high for VDI architecture: instead of buying a laptop when a user needs one, all the hardware and setup for VDI must be in place before virtual devices are deployed. Lastly, IT administrators need to be on board with the project; deploying virtual devices requires high proficiency in working with servers, and administrators may need training. If these challenges can be met, companies can reap the benefits of VDI.

Benefits of VDI

User Experience

Resources can be better tailored to each user. In addition, PC upgrades and repairs take minutes instead of hours.

Productivity & Flexibility

A dedicated, virtualized machine allows users to easily access their work-related resources from anywhere and on any device with an internet connection.

Ease of Management

A single central server location for hardware and software upgrades, as well as monitoring and patching, makes IT admins' jobs easier.

Efficiency of Resources

Resources are neither underutilized nor overprovisioned. Each virtual machine gets a hardware allocation that fits its user.

VDI Architecture Explained

Virtual Machines from a Single Server

The concept of VDI is to take the hardware in a server and allocate it to virtual machines (VMs). The image on the right visualizes the allocation of resources, and the image below depicts the virtualization architecture that is discussed next.

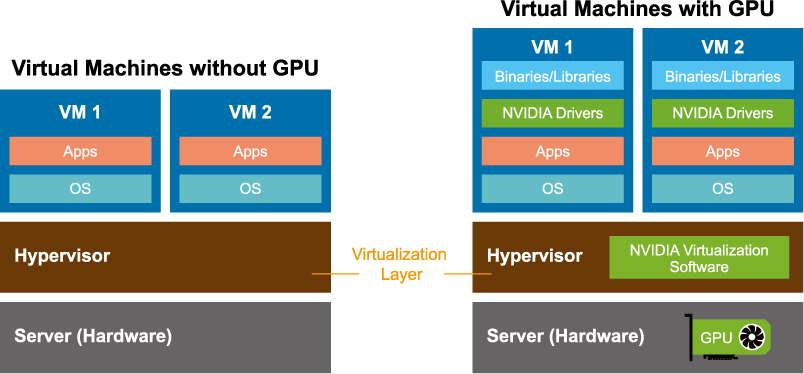

To start, a server is built with typical hardware (CPU, RAM, storage, GPU, network interconnects, etc.) that has been sized for the end users. After hardware installation, a hypervisor (Microsoft Hyper-V, Citrix XenServer, etc.) is installed to abstract the hardware. This hypervisor creates a virtualization layer upon which the VMs are built. The hypervisor, which sits between the VMs and the hardware, contains a Virtual Machine Manager. Through this technology, each VM is created with its own operating system and applications. At this point, the server has virtualized everything but the GPU.
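
The allocation idea can be sketched in a few lines of Python. This is only a minimal illustration, not how any particular hypervisor works: the `Host` and `VM` classes, the `fits` helper, and all of the numbers are hypothetical, and the check assumes that CPU cores and RAM are not overcommitted.

```python
from dataclasses import dataclass


@dataclass
class VM:
    name: str
    vcpus: int
    ram_gb: int


@dataclass
class Host:
    cpu_cores: int
    ram_gb: int

    def fits(self, vms: list[VM]) -> bool:
        """Return True if the VMs' combined demand stays within the host's hardware."""
        total_vcpus = sum(vm.vcpus for vm in vms)
        total_ram = sum(vm.ram_gb for vm in vms)
        return total_vcpus <= self.cpu_cores and total_ram <= self.ram_gb


# Hypothetical host and desktop pool, chosen only for illustration.
host = Host(cpu_cores=64, ram_gb=512)
pool = [VM(f"desktop-{i}", vcpus=4, ram_gb=16) for i in range(16)]

# 16 desktops need 16 x 4 = 64 vCPUs and 16 x 16 = 256 GB of RAM.
print(host.fits(pool))
```

A check like this is the simplest form of capacity planning; real deployments often allow some CPU overcommitment, which this sketch deliberately leaves out.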

To create a virtual GPU, software such as NVIDIA Virtual GPU Manager is installed in the hypervisor. This software, coupled with other NVIDIA software (vCS, vDWS, vPC, or vApps), allows customization of the virtual machine. The VMs sit on this vGPU layer as before, but now with NVIDIA drivers and binaries/libraries.

Virtualization with and without a Virtual GPU

Determine the GIGABYTE server and NVIDIA Solution

The details below are meant to help you decide which solution fits best.

・Understand the types of users to select the appropriate NVIDIA Virtual software

・Learn about the different types of NVIDIA GPUs based on specifications and usage

・Choose from the list of GIGABYTE servers that are NVIDIA vGPU certified

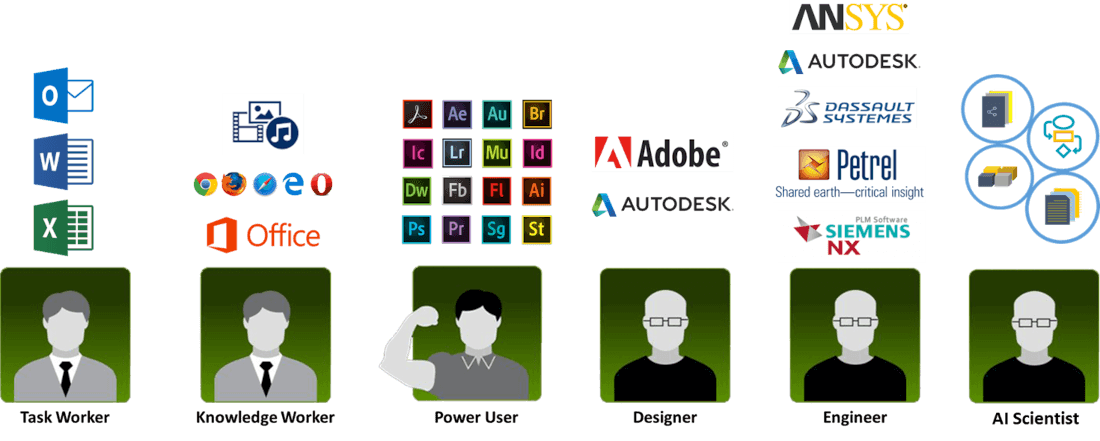

Types of VDI Users

Programs Used by Worker Type

Task Worker:

Performs basic tasks and does not need graphics acceleration.

NVIDIA Virtual Software: vApps

NVIDIA GPU Used: Turing T4 and

Knowledge Worker:

Entry-level graphics.

NVIDIA Virtual Software: vPC or vDWS

NVIDIA GPU Used: Turing T4 or A16

Power User:

Mid-level to high-end graphics.

NVIDIA Virtual Software: vDWS or vCS

NVIDIA GPU Used: RTX6000, A10 or Turing T4

Designer, Engineer, AI Scientist:

High-end computing.

NVIDIA Virtual Software: vCS

NVIDIA GPU Used: A100, A30, RTX A6000, or Turing T4
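
The worker-type recommendations above can also be expressed as a small lookup table, which makes it easy to ask the reverse question: which worker types is a given GPU recommended for? The sketch below is only an illustration. The `RECOMMENDATIONS` dictionary and the `worker_types_for` helper are hypothetical names, and the Task Worker entry lists only the T4 because the GPU list for that worker type is incomplete in the text.

```python
# Worker-type recommendations transcribed from the list above.
RECOMMENDATIONS = {
    # The GPU list for Task Worker is cut off after "Turing T4" in the text.
    "Task Worker": {"software": ["vApps"], "gpus": ["T4"]},
    "Knowledge Worker": {"software": ["vPC", "vDWS"], "gpus": ["T4", "A16"]},
    "Power User": {"software": ["vDWS", "vCS"], "gpus": ["RTX6000", "A10", "T4"]},
    "Designer, Engineer, AI Scientist": {
        "software": ["vCS"],
        "gpus": ["A100", "A30", "RTX A6000", "T4"],
    },
}


def worker_types_for(gpu: str) -> list[str]:
    """List the worker types whose recommended GPUs include `gpu`."""
    return [worker for worker, rec in RECOMMENDATIONS.items() if gpu in rec["gpus"]]


print(worker_types_for("A16"))
print(worker_types_for("T4"))
```

In this data the T4 appears under every worker type, while the A16 is listed only for knowledge workers.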

NVIDIA Accelerators for Virtualized Compute Server Environment

| NVIDIA Models | A10 | A16 | A30 | A40 | A100-80G PCIe | RTX A6000 | T4 |
|---|---|---|---|---|---|---|---|
| Architecture | Ampere | Ampere | Ampere | Ampere | Ampere | Ampere | Turing |
| CUDA cores | 9216 | 10240 | 3584 | 10752 | 6912 | 10752 | 2560 |
| Single-Precision | 31.24 TFLOPS | 34.712 TFLOPS | 10.320 TFLOPS | 37.4 TFLOPS | 19.5 TFLOPS | 38.7 TFLOPS | 8.1 TFLOPS |
| GPU Memory | 24GB GDDR6 | 4x 16GB GDDR6 | 24GB HBM2 | 48GB GDDR6 | 80GB HBM2e | 48GB GDDR6 | 16GB GDDR6 |
| Memory Bandwidth | 600 GB/s | 4x 231.9 GB/s | 933 GB/s | 695.8 GB/s | 1935 GB/s | 768 GB/s | 320 GB/s |
| Interface | PCIe Gen 4 | PCIe Gen 4 | PCIe Gen 4 | PCIe Gen 4 | PCIe Gen 4 | PCIe Gen 4 | PCIe Gen 3 |
| Max Power | 150W | 250W | 165W | 300W | 300W | 300W | 70W |
| Form Factor | single-slot | dual-slot | dual-slot | dual-slot | dual-slot | dual-slot | single-slot |
| Support NVLink | No | No | No | No | PCIe (NVLink Bridge) | NVLink Bridge | No |
| Usage | Mainstream graphics and video with AI | VDI applications (support 64 online users) | HPC, AI, data analytics, and mainstream compute | Mainstream visual computing | Ultra-high-end rendering, 3D design, AI (from FP64 to INT4) and data science | High end visual computing | Entry-level to 3D design and engineering, AI (inference), and data science |
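
One way to read the GPU Memory row is as an upper bound on the number of virtual desktops per card when the frame buffer is split into equal, fixed-size slices. The sketch below makes that arithmetic explicit. The slice sizes and the `max_desktops` helper are assumptions for illustration only, and real limits also depend on the vGPU software edition and its supported profiles. With 1 GB slices, the A16's four 16 GB GPUs work out to 64 desktops, which lines up with the 64 users quoted in the Usage row.

```python
# (GPUs per card, memory per GPU in GB), taken from the GPU Memory row above.
GPU_MEMORY = {
    "A10": (1, 24),
    "A16": (4, 16),  # the A16 carries four GPUs with 16 GB each
    "A30": (1, 24),
    "A40": (1, 48),
    "A100-80G PCIe": (1, 80),
    "RTX A6000": (1, 48),
    "T4": (1, 16),
}


def max_desktops(gpu: str, slice_gb: int) -> int:
    """Upper bound on desktops per card when every desktop gets `slice_gb` of frame buffer."""
    gpu_count, memory_gb = GPU_MEMORY[gpu]
    # A slice cannot span two physical GPUs, so divide per GPU first.
    return gpu_count * (memory_gb // slice_gb)


for gpu in ("T4", "A16", "A40"):
    print(gpu, max_desktops(gpu, 1), max_desktops(gpu, 2))
```

Dividing per GPU before multiplying matters for slice sizes that do not divide the per-GPU memory evenly, since leftover memory on one GPU cannot be pooled with another.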

GIGABYTE Servers (NVIDIA vGPU Certified)

| NVIDIA Models | A10 | A16 | A30 | A40 | A100-80G PCIe | RTX A6000 | T4 |
|---|---|---|---|---|---|---|---|
| 1U G-series | G191-H44 | G191-H44 | G191-H44 | G191-H44 | G191-H44 | G191-H44 | G191-H44, G180-G00 |
| 1U OCP-series | - | - | - | - | T182-Z70 | - | T181-G23, T181-G24, T181-Z70 |
| 1U E-series | E152-ZE0 | - | - | - | E162-220 | - | - |
| 2U G-series | G242-Z11, G291-280, G291-281, G292-2G0, G292-Z24, G292-Z40, G292-Z43, G292-Z44 | G242-Z11, G292-Z24, G292-Z40, G292-Z44 | G291-280, G291-281, G292-Z24, G292-Z40, G292-Z44 | G241-G40, G242-Z11, G292-Z24, G292-Z40, G292-Z44, G292-280 | G292-280, G292-Z40, G292-Z44, G291-Z40, G241-G40, G242-P31, G242-Z11, G291-281, G242-P32, G292-Z20, G292-Z24 | G242-Z11, G291-280, G292-Z20, G292-Z40, G292-Z44, G292-Z45, G292-280 | G291-280, G291-281, G291-2G0, G291-Z20, G221-Z30, G241-G40, G242-Z10, G242-Z11, G242-P31, G292-Z20, G292-Z24, G292-Z40, G292-Z42, G292-Z43, G292-Z44, G292-Z22 |
| 2U R-series | R282-Z96, R292-4S0 | R262-ZA2, R281-3C2, R282-Z93, R282-Z96, R282-Z9 | R262-ZA2, R281-3C2, R282-Z93, R282-Z96, R282-Z9, R281-G30 | R262-ZA2, R281-3C2, R282-Z93, R282-Z96, R282-Z9, R281-G30 | R282-Z93, R282-G30, R281-3C2, R281-G30, R282-Z96, R292-4S0 | R292-4S0 | R281-G30, R281-3C2, R282-Z93, R281-T95, R282-G30, R292-4S0 |
| 2U E-series | E251-U70 | E251-U70 | E251-U70 | E251-U70 | E251-U70 | - | E251-U70 |
| 2U H-series | - | - | - | - | - | - | H231-G20 |
| 4U G-series | G482-Z54, G492-H80, G492-HA0, G492-Z51 | G492-Z51, G492-HA0, G482-Z52, G482-Z54, G481-HA0 | G482-Z54, G492-H80, G492-HA0, G492-Z51 | G492-Z51, G492-HA0, G482-Z52, G482-Z54, G481-HA0, G492-H80 | G482-Z54, G492-Z52, G492-H80, G492-HA0, G481-HA0, G482-Z50, G482-Z52, G492-Z50, G492-Z51 | G481-HA0, G482-Z53, G482-Z54, G492-H80, G492-HA0, G492-Z51 | G481-H80, G481-HA0, G481-HA1, G482-Z50, G482-Z51, G482-Z54, G492-H80, G492-HA0, G492-Z51 |
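
When a deployment mixes GPU models, the certification table can be queried as data. The sketch below shows one possible approach using set containment. It covers only the 1U G-series, 2U E-series, and 2U H-series rows of the table, and the `CERTIFIED` dictionary and `servers_supporting` helper are hypothetical names introduced for this example.

```python
# Server -> certified GPU models, transcribed from three rows of the table above.
ALL_GPUS = {"A10", "A16", "A30", "A40", "A100-80G PCIe", "RTX A6000", "T4"}

CERTIFIED = {
    "G191-H44": set(ALL_GPUS),              # 1U G-series, listed under every GPU
    "G180-G00": {"T4"},                     # 1U G-series
    "E251-U70": ALL_GPUS - {"RTX A6000"},   # 2U E-series
    "H231-G20": {"T4"},                     # 2U H-series
}


def servers_supporting(*gpus: str) -> list[str]:
    """Return the servers certified for every GPU model in `gpus`, sorted by name."""
    wanted = set(gpus)
    return sorted(server for server, certified in CERTIFIED.items() if wanted <= certified)


print(servers_supporting("RTX A6000"))
print(servers_supporting("A16", "T4"))
```

Extending the dictionary with the remaining rows would give the same query over the full list of certified servers.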